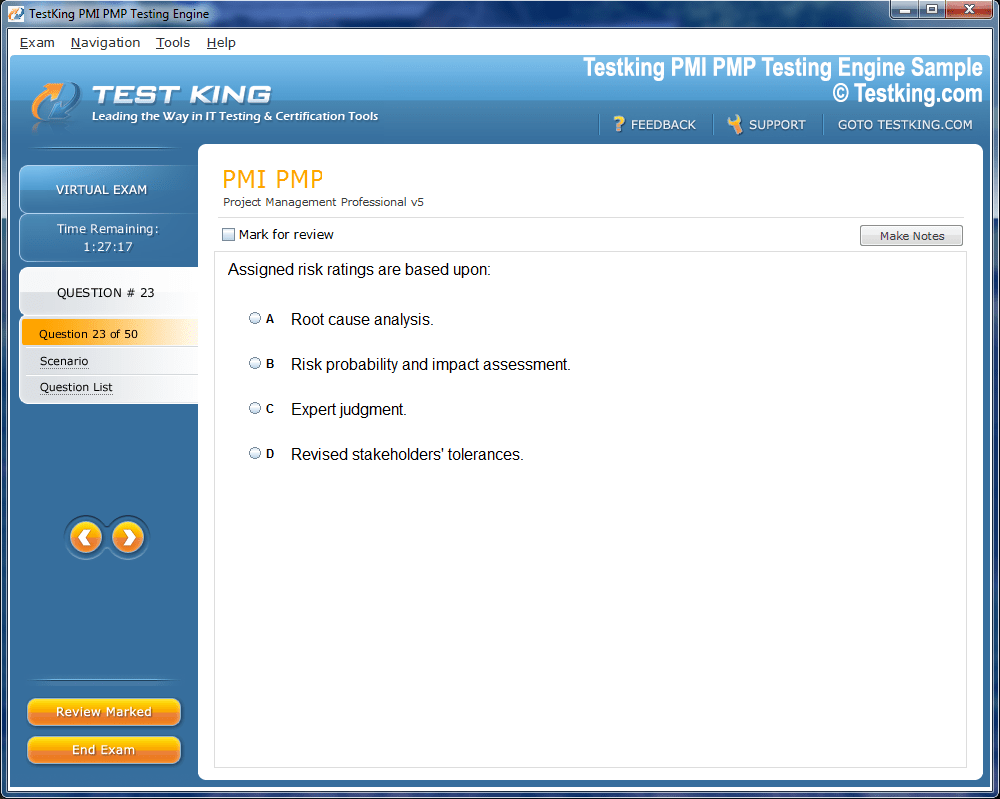

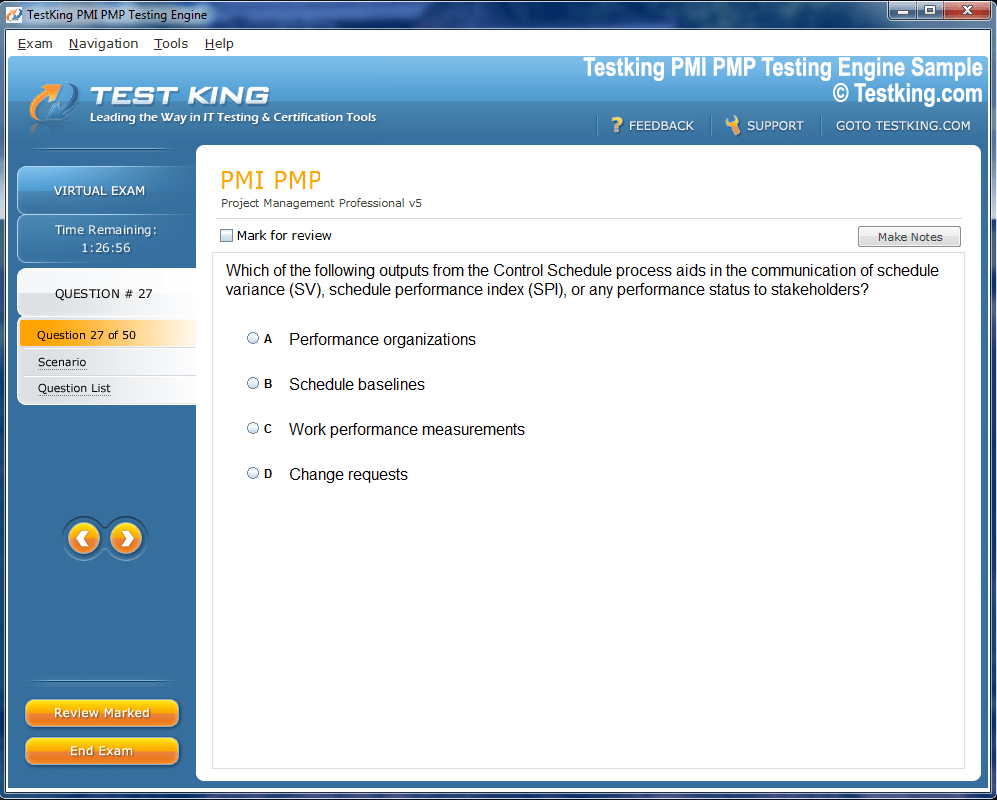

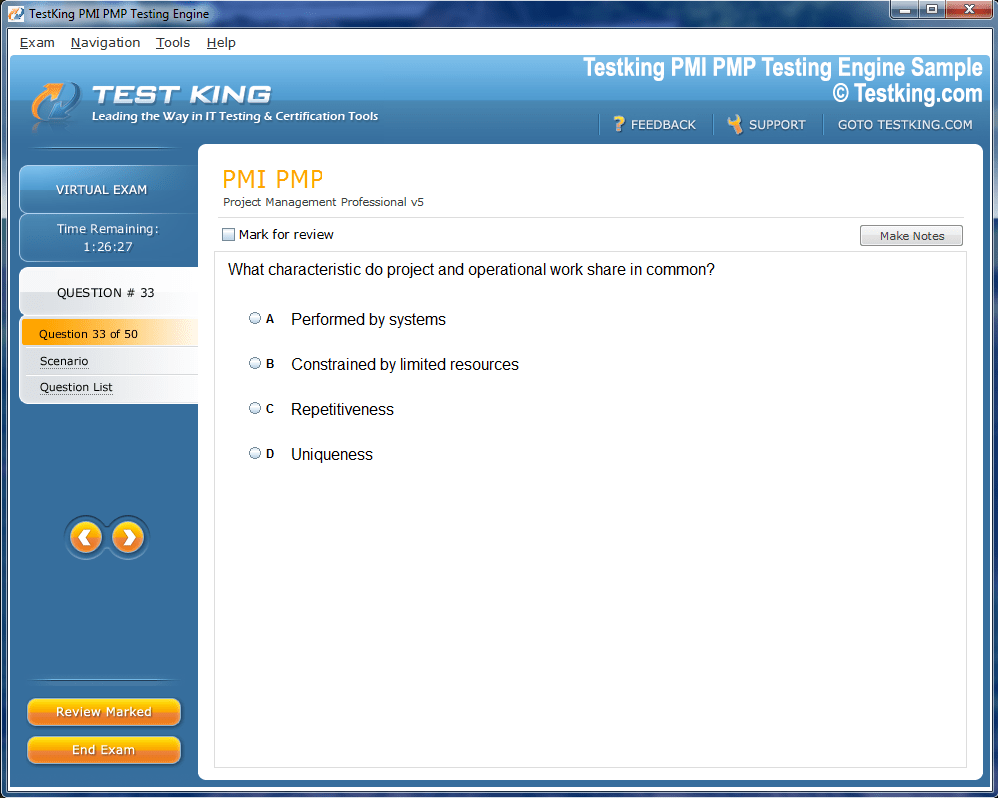

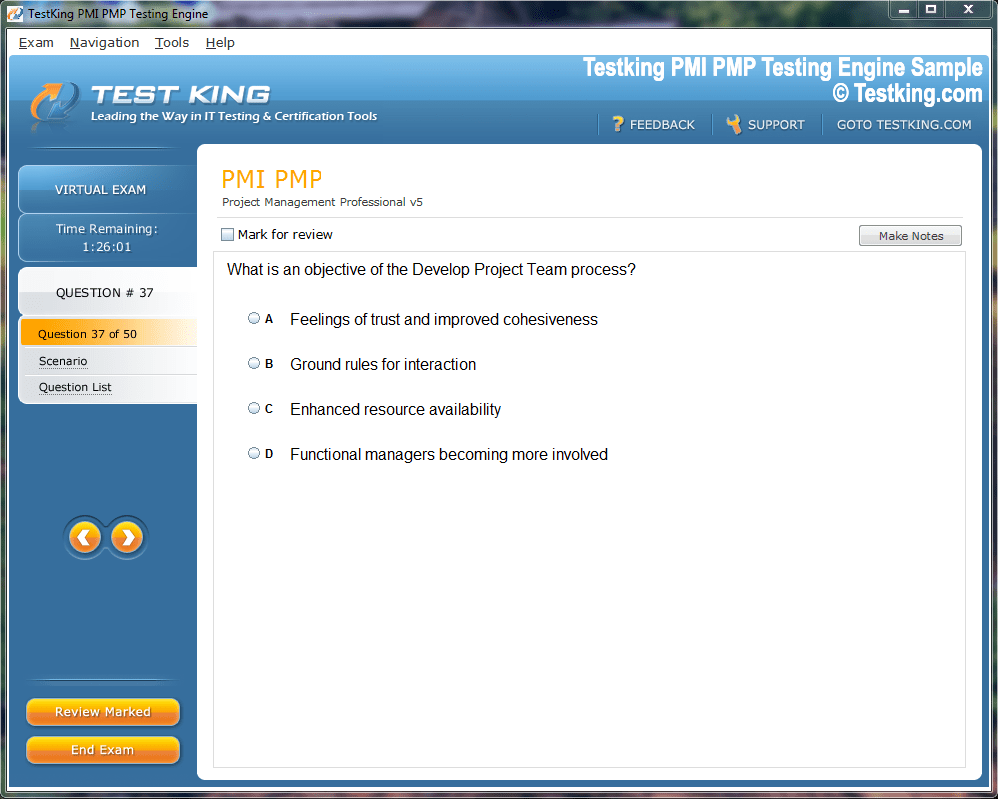

Product Screenshots

Frequently Asked Questions

Where can I download my products after I have completed the purchase?

Your products are available immediately after you have made the payment. You can download them from your Member's Area. Right after your purchase has been confirmed, the website will transfer you to the Member's Area. All you have to do is log in and download the products you have purchased to your computer.

How long will my product be valid?

All Testking products are valid for 90 days from the date of purchase. These 90 days also cover updates that may come in during this time. This includes new questions, updates and changes by our editing team and more. These updates will be automatically downloaded to your computer to make sure that you get the most up-to-date version of your exam preparation materials.

How can I renew my products after the expiry date? Or do I need to purchase it again?

When your product expires after the 90 days, you don't need to purchase it again. Instead, you should head to your Member's Area, where there is an option of renewing your products with a 30% discount.

Please keep in mind that you need to renew your product to continue using it after the expiry date.

How many computers can I download Testking software on?

You can download your Testking products on a maximum of 2 (two) computers or devices. To use the software on more than 2 machines, you need to purchase an additional subscription, which can be easily done on the website. Please email support@testking.com if you need to use more than 5 (five) computers.

What operating systems are supported by your Testing Engine software?

Our GH-300 testing engine is supported by all modern Windows editions, Android and iPhone/iPad versions. Mac and iOS versions of the software are now being developed. Please stay tuned for updates if you're interested in Mac and iOS versions of Testking software.

Top Microsoft Exams

- AZ-104 - Microsoft Azure Administrator

- AI-900 - Microsoft Azure AI Fundamentals

- DP-700 - Implementing Data Engineering Solutions Using Microsoft Fabric

- AZ-305 - Designing Microsoft Azure Infrastructure Solutions

- AI-102 - Designing and Implementing a Microsoft Azure AI Solution

- PL-300 - Microsoft Power BI Data Analyst

- SC-300 - Microsoft Identity and Access Administrator

- AZ-900 - Microsoft Azure Fundamentals

- SC-200 - Microsoft Security Operations Analyst

- MD-102 - Endpoint Administrator

- MS-102 - Microsoft 365 Administrator

- AB-100 - Agentic AI Business Solutions Architect

- AB-730 - AI Business Professional

- AB-731 - AI Transformation Leader

- DP-600 - Implementing Analytics Solutions Using Microsoft Fabric

- SC-401 - Administering Information Security in Microsoft 365

- AZ-700 - Designing and Implementing Microsoft Azure Networking Solutions

- SC-100 - Microsoft Cybersecurity Architect

- AZ-500 - Microsoft Azure Security Technologies

- AB-900 - Microsoft 365 Copilot and Agent Administration Fundamentals

- SC-900 - Microsoft Security, Compliance, and Identity Fundamentals

- AZ-204 - Developing Solutions for Microsoft Azure

- PL-200 - Microsoft Power Platform Functional Consultant

- AZ-140 - Configuring and Operating Microsoft Azure Virtual Desktop

- AZ-400 - Designing and Implementing Microsoft DevOps Solutions

- PL-400 - Microsoft Power Platform Developer

- GH-300 - GitHub Copilot

- AZ-800 - Administering Windows Server Hybrid Core Infrastructure

- AZ-801 - Configuring Windows Server Hybrid Advanced Services

- PL-600 - Microsoft Power Platform Solution Architect

- PL-900 - Microsoft Power Platform Fundamentals

- MS-900 - Microsoft 365 Fundamentals

- DP-300 - Administering Microsoft Azure SQL Solutions

- MB-800 - Microsoft Dynamics 365 Business Central Functional Consultant

- MS-700 - Managing Microsoft Teams

- MB-310 - Microsoft Dynamics 365 Finance Functional Consultant

- MB-280 - Microsoft Dynamics 365 Customer Experience Analyst

- DP-900 - Microsoft Azure Data Fundamentals

- MB-330 - Microsoft Dynamics 365 Supply Chain Management

- DP-100 - Designing and Implementing a Data Science Solution on Azure

- MB-230 - Microsoft Dynamics 365 Customer Service Functional Consultant

- MS-721 - Collaboration Communications Systems Engineer

- MB-820 - Microsoft Dynamics 365 Business Central Developer

- MB-335 - Microsoft Dynamics 365 Supply Chain Management Functional Consultant Expert

- GH-900 - GitHub Foundations

- MB-500 - Microsoft Dynamics 365: Finance and Operations Apps Developer

- GH-200 - GitHub Actions

- PL-500 - Microsoft Power Automate RPA Developer

- AI-300 - Operationalizing Machine Learning and Generative AI Solutions

- DP-420 - Designing and Implementing Cloud-Native Applications Using Microsoft Azure Cosmos DB

- MB-700 - Microsoft Dynamics 365: Finance and Operations Apps Solution Architect

- GH-500 - GitHub Advanced Security

- MB-240 - Microsoft Dynamics 365 for Field Service

- AZ-120 - Planning and Administering Microsoft Azure for SAP Workloads

- DP-203 - Data Engineering on Microsoft Azure

- SC-400 - Microsoft Information Protection Administrator

- GH-100 - GitHub Administration

- MB-920 - Microsoft Dynamics 365 Fundamentals Finance and Operations Apps (ERP)

- 98-382 - Introduction to Programming Using JavaScript

- AZ-303 - Microsoft Azure Architect Technologies

- MB-910 - Microsoft Dynamics 365 Fundamentals Customer Engagement Apps (CRM)

- 98-367 - Security Fundamentals

- 98-375 - HTML5 App Development Fundamentals

- 62-193 - Technology Literacy for Educators

- MO-200 - Microsoft Excel (Excel and Excel 2019)

- 98-383 - Introduction to Programming Using HTML and CSS

Microsoft GH-300 Strategies for Responsible AI and GitHub Copilot

The Microsoft GH-300 represents a pivotal moment in artificial intelligence infrastructure, combining unprecedented computational power with a framework designed for responsible deployment. This specialized hardware architecture emerges at a time when organizations face mounting pressure to balance innovation velocity with ethical considerations. The chip's design philosophy reflects a fundamental shift in how technology companies approach AI development, embedding safeguards and monitoring capabilities directly into silicon rather than treating them as afterthoughts.

Understanding this convergence requires examining how hardware choices influence algorithmic behavior and deployment patterns. The GH-300's architecture enables real-time monitoring of model behavior, resource consumption, and inference patterns that were previously invisible to operators. Organizations investing in IT and tech podcasts often discover these nuanced relationships between hardware capabilities and responsible AI practices. This integration creates opportunities for proactive governance rather than reactive compliance, fundamentally changing how teams think about AI system design.

GitHub Copilot as a Testing Ground for Responsible AI Principles

GitHub Copilot serves as one of the most visible implementations of AI assistance technology, touching millions of developers daily through code suggestions and completion. This widespread deployment makes it an ideal case study for responsible AI implementation, where theoretical principles meet practical challenges at scale. The system must balance competing demands including accuracy, speed, intellectual property concerns, security implications, and user autonomy in ways that inform broader AI governance strategies.

The platform's evolution demonstrates how responsible AI principles translate into product features and operational constraints. Engineers working on Copilot face constant decisions about suggestion filtering, attribution mechanisms, and transparency layers that reveal model confidence levels. Professionals moving into entry-level technology roles often encounter these systems first-hand, which makes their design choices particularly consequential for shaping industry norms and expectations around AI-assisted workflows.

Architectural Foundations of the GH-300 Platform

The GH-300's technical specifications reveal deliberate design choices favoring responsible AI deployment over raw performance maximization. Unlike previous generations focused primarily on inference speed and throughput, this architecture incorporates dedicated circuits for lineage tracking, bias detection, and output verification. These components operate in parallel with primary computation pathways, creating minimal latency overhead while providing continuous behavioral monitoring that supports compliance and quality assurance objectives.

Memory architecture decisions further reinforce responsible AI priorities through segmented access patterns and configurable retention policies. The chip implements hardware-enforced data isolation that prevents cross-contamination between training datasets and inference workloads, addressing a persistent challenge in multi-tenant AI systems. Technical professionals who enter the field through help desk roles benefit from understanding these architectural patterns, as they increasingly support systems built on these foundations and must troubleshoot issues arising from their unique characteristics.

Governance Frameworks Enabled by Hardware Capabilities

Hardware capabilities directly shape the governance frameworks that organizations can realistically implement for AI systems. The GH-300's telemetry features enable granular audit trails that capture model inputs, intermediate states, and outputs with timestamps and version markers. This comprehensive logging supports retrospective analysis when questions arise about specific AI decisions, addressing accountability gaps that have plagued earlier implementations where reconstruction of system behavior proved impossible.
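As a rough illustration of the kind of audit trail described above, the following sketch records each model decision with a timestamp and a version marker and then retrieves every decision made by one model version. It is a minimal software sketch, not a description of any GH-300 or Microsoft interface; the `AuditRecord` fields and both helper functions are hypothetical names chosen for this example.

```python
import json
from dataclasses import dataclass, asdict
from datetime import datetime, timezone


@dataclass(frozen=True)
class AuditRecord:
    """Hypothetical audit schema; field names are assumptions for illustration."""
    timestamp: str       # ISO 8601 string in UTC
    model_version: str   # version marker of the model that produced the output
    model_input: str
    model_output: str


def record_decision(log, model_version, model_input, model_output):
    """Append one decision to an in-memory log as a JSON line."""
    entry = AuditRecord(
        timestamp=datetime.now(timezone.utc).isoformat(),
        model_version=model_version,
        model_input=model_input,
        model_output=model_output,
    )
    log.append(json.dumps(asdict(entry), sort_keys=True))
    return entry


def decisions_for_version(log, model_version):
    """Retrospective lookup: every logged decision made by one model version."""
    parsed = (json.loads(line) for line in log)
    return [r for r in parsed if r["model_version"] == model_version]
```

Storing one JSON object per line keeps the log append-only and easy to filter later, which is the property that retrospective analysis depends on.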

These capabilities transform governance from aspirational policy statements into enforceable technical controls with measurable outcomes. Organizations can define acceptable operating parameters and rely on hardware-level enforcement rather than trusting software-layer safeguards that determined actors might circumvent. Teams focused on help desk careers increasingly interact with these governance mechanisms, making their practical understanding essential for effective system administration and user support across enterprise AI deployments.

Bias Detection and Mitigation at the Silicon Level

Traditional bias mitigation strategies operate at the data preprocessing or post-processing stages, introducing latency and complexity that discourage their consistent application. The GH-300 incorporates specialized logic for detecting statistical anomalies and distribution shifts that often signal problematic bias in model outputs. These circuits analyze activation patterns across neural network layers, flagging suspicious concentration or unexpected correlations that merit human review before results propagate to production systems.
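The idea of flagging distribution shifts for human review can be sketched in software. The example below uses the population stability index (PSI), a common drift statistic, as a stand-in; it compares output histograms rather than activation patterns, and nothing here is taken from GH-300 documentation. The function names and the review threshold of 0.25 are assumptions made for illustration.

```python
import math


def population_stability_index(baseline_counts, current_counts, eps=1e-6):
    """PSI between two histograms that share the same bins."""
    if len(baseline_counts) != len(current_counts):
        raise ValueError("histograms must use the same bins")
    b_total = sum(baseline_counts)
    c_total = sum(current_counts)
    psi = 0.0
    for b, c in zip(baseline_counts, current_counts):
        # eps keeps empty bins from producing log(0) or division by zero.
        p = max(b / b_total, eps)
        q = max(c / c_total, eps)
        psi += (q - p) * math.log(q / p)
    return psi


def needs_human_review(baseline_counts, current_counts, threshold=0.25):
    """Flag a batch when the shift exceeds an assumed review threshold."""
    return population_stability_index(baseline_counts, current_counts) > threshold
```

A batch whose outcome histogram matches the baseline yields a PSI of zero and passes, while a batch such as `[10, 90]` against a baseline of `[90, 10]` is flagged for review.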

This silicon-level intervention creates opportunities for bias detection with negligible performance impact, removing a common objection to rigorous fairness testing. The approach complements rather than replaces algorithmic fairness techniques, providing an additional verification layer that operates independently of model architecture. Customer service professionals learning to translate soft skills into measurable triumphs find these technical safeguards reassuring, knowing that systems incorporate multiple redundant protections against discriminatory outputs that could harm users or violate regulatory requirements.

Energy Efficiency as a Responsibility Dimension

Responsible AI extends beyond algorithmic fairness and transparency to encompass environmental impact through energy consumption. The GH-300's power management subsystems represent significant engineering investment in efficiency, incorporating dynamic voltage scaling, selective computation pathways, and intelligent workload distribution that minimize wasted cycles. These features reflect growing recognition that AI's environmental footprint constitutes a genuine responsibility consideration, not merely an operational cost to be optimized.

Efficiency improvements compound across large-scale deployments, where incremental per-chip savings aggregate to substantial reductions in total energy consumption and carbon emissions. Organizations pursuing sustainability goals increasingly evaluate AI infrastructure through this lens, making energy efficiency a competitive differentiator and procurement criterion. Infrastructure specialists examining AWS solutions architect salaries recognize that expertise in efficient deployment patterns commands premium compensation as environmental responsibility becomes a non-negotiable business requirement rather than an optional consideration.

GitHub Copilot's Content Filtering Architecture

Content filtering represents one of Copilot's most complex responsible AI challenges, requiring real-time analysis of suggested code against multiple criteria including security vulnerabilities, license compatibility, and appropriateness. The system employs multi-stage filtering that begins with lightweight pattern matching before escalating to computationally expensive semantic analysis for ambiguous cases. This tiered approach balances thoroughness against latency requirements, ensuring developers receive timely suggestions while maintaining protective screening against problematic content.
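The tiered approach described above can be sketched as follows. This is a toy illustration of the general pattern (cheap pattern matching first, a costlier check only for ambiguous cases) and does not reflect how GitHub Copilot's filters are actually built; the patterns, the `expensive_semantic_check` placeholder, and the `filter_suggestion` function are all hypothetical.

```python
import re

# Stage 1: cheap patterns that are conclusive on their own (illustrative only).
BLOCK_PATTERNS = [
    re.compile(r"-----BEGIN (RSA |EC )?PRIVATE KEY-----"),
    re.compile(r"(?i)password\s*=\s*['\"][^'\"]+['\"]"),
]
# Patterns that are suspicious but not conclusive, so they escalate to stage 2.
ESCALATE_PATTERNS = [
    re.compile(r"\beval\s*\("),
    re.compile(r"\bsubprocess\b"),
]


def expensive_semantic_check(suggestion):
    """Placeholder for a costly analysis step; here a trivial stand-in rule."""
    return "shell=True" not in suggestion


def filter_suggestion(suggestion):
    """Return (verdict, stage), where stage names the tier that decided."""
    if any(p.search(suggestion) for p in BLOCK_PATTERNS):
        return ("block", "pattern")
    if any(p.search(suggestion) for p in ESCALATE_PATTERNS):
        verdict = "allow" if expensive_semantic_check(suggestion) else "block"
        return (verdict, "semantic")
    return ("allow", "pattern")
```

Most suggestions never reach the second stage, which is how a design of this shape keeps average latency low while still examining ambiguous cases more closely.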

Filter design involves constant tension between precision and recall, where overly aggressive filtering frustrates users with excessive false positives while permissive settings allow harmful suggestions to reach developers. Product teams continuously refine these thresholds based on usage telemetry and incident reports, treating filtering as an ongoing optimization challenge rather than a solved problem. Security researchers studying adversarial machine learning understand how sophisticated attackers probe these filters, necessitating regular updates and defensive improvements to maintain protective efficacy against evolving threats.
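The precision and recall tension can be made concrete with a small calculation. The sketch below assumes a filter that assigns each suggestion a risk score and blocks anything at or above a threshold; the scores and labels in the usage note are invented toy data, and `precision_recall` is a hypothetical helper rather than part of any product.

```python
def precision_recall(scores, labels, threshold):
    """Precision and recall of the rule 'block if score >= threshold'.

    scores: risk scores in [0, 1]; labels: True when a suggestion is truly harmful.
    """
    pairs = list(zip(scores, labels))
    tp = sum(1 for s, y in pairs if s >= threshold and y)
    fp = sum(1 for s, y in pairs if s >= threshold and not y)
    fn = sum(1 for s, y in pairs if s < threshold and y)
    # With nothing blocked (or nothing harmful), treat the ratio as perfect.
    precision = tp / (tp + fp) if (tp + fp) else 1.0
    recall = tp / (tp + fn) if (tp + fn) else 1.0
    return precision, recall
```

With toy scores `[0.9, 0.8, 0.6, 0.4, 0.3, 0.1]` and labels `[True, True, False, True, False, False]`, a strict threshold of 0.7 blocks only harmful items but misses one of them, while a permissive threshold of 0.35 catches every harmful item at the cost of one false positive.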

Transparency Mechanisms in AI-Assisted Development

Transparency in AI systems encompasses multiple dimensions including model provenance, training data composition, confidence indicators, and decision rationale. GitHub Copilot implements transparency through confidence scores attached to suggestions, reference links to similar code in public repositories, and explicit indicators when suggestions derive from specific licensed codebases. These features empower developers to make informed decisions about accepting or modifying AI-generated content based on understanding its origins and reliability.

Implementation challenges arise from balancing transparency against cognitive load, where excessive information overwhelms users and diminishes rather than enhances decision quality. Interface designers carefully calibrate what information appears by default versus what remains accessible through progressive disclosure, recognizing that different users need different transparency levels depending on context. Cloud engineers working with Amazon S3 concepts appreciate similar transparency around data lifecycle and access patterns, demonstrating how transparency principles manifest across various technology domains with common underlying patterns.

Training Data Governance and Quality Assurance

The quality and composition of training data fundamentally determine AI system capabilities and limitations, making data governance central to responsible AI implementation. GitHub Copilot's training leverages public code repositories, raising questions about consent, attribution, and appropriate use that extend beyond simple copyright considerations. Microsoft's approach involves filtering training data to exclude certain licenses, implementing opt-out mechanisms for repository owners, and developing attribution features that acknowledge code origins when suggestions closely match training examples.

These governance mechanisms evolve continuously as legal frameworks develop and community norms shift around acceptable AI training practices. Organizations must balance access to comprehensive training data against respecting contributor preferences and intellectual property rights, navigating tensions without clear precedent or settled law. AI researchers exploring transfer learning foundations encounter similar dilemmas around appropriate knowledge transfer between domains, highlighting how data governance challenges permeate multiple aspects of modern machine learning practice.

User Agency and Control in AI-Assisted Workflows

Preserving user agency represents a core responsible AI principle, ensuring humans remain in control rather than becoming passive recipients of algorithmic decisions. GitHub Copilot's interface design emphasizes this through suggestion presentation that requires explicit acceptance, modification capabilities for partial adoption, and easy dismissal when suggestions prove unhelpful. These interaction patterns reinforce that AI serves as an assistant rather than an autonomous actor, maintaining human judgment as the final arbiter of code quality and appropriateness.

Agency considerations extend to personalization and adaptation, where systems learn from user preferences without creating dependency or limiting exposure to diverse approaches. Striking this balance requires careful product design that enhances productivity without constraining creativity or reinforcing narrow coding patterns. Developers working with Docker on Linux value similar control over containerization choices, preferring tools that offer intelligent defaults while permitting deep customization when specific requirements demand alternative approaches.

Security Implications of AI-Generated Code

AI-generated code introduces novel security considerations beyond traditional software development risks, as models may suggest patterns containing vulnerabilities learned from flawed training examples. GitHub Copilot addresses this through security-focused filtering that screens suggestions against known vulnerability databases and suspicious patterns associated with common security flaws. However, this screening cannot guarantee absolute security: novel vulnerability classes and context-specific risks may evade automated detection and still require human security expertise.

Organizations adopting AI-assisted development must update security review processes to account for these new risk vectors, training developers to scrutinize AI suggestions with appropriate skepticism. This represents a cultural shift from viewing AI suggestions as inherently trustworthy to treating them as potentially flawed contributions requiring validation. Security specialists pursuing CCIE data center mastery develop similar healthy skepticism toward automated configuration suggestions, recognizing that complex systems demand human judgment to identify subtle misconfigurations that automated tools might miss.

Model Versioning and Reproducibility Standards

Reproducibility in AI systems requires careful versioning not only of model weights but also of training data, hyperparameters, and infrastructure configurations that influence model behavior. The GH-300's architecture supports this through hardware-assisted versioning that cryptographically signs model checkpoints and maintains tamper-evident logs of training processes. These capabilities enable organizations to recreate specific model versions and verify that deployed models match approved configurations, addressing compliance requirements in regulated industries.
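A software analogue of signed checkpoints and tamper-evident logs can be sketched with the Python standard library. Note the substitutions: this example uses an HMAC (a keyed message authentication code) in place of an asymmetric digital signature, and a simple hash chain in place of whatever hardware mechanism is intended. All function names are hypothetical.

```python
import hashlib
import hmac


def sign_checkpoint(checkpoint_bytes, key):
    """HMAC-SHA256 tag over the raw checkpoint bytes."""
    return hmac.new(key, checkpoint_bytes, hashlib.sha256).hexdigest()


def verify_checkpoint(checkpoint_bytes, key, expected_tag):
    """Constant-time comparison against the tag recorded at approval time."""
    return hmac.compare_digest(sign_checkpoint(checkpoint_bytes, key), expected_tag)


def append_entry(chain, message):
    """Append a log entry whose hash covers the previous entry's hash."""
    prev_hash = chain[-1]["hash"] if chain else "0" * 64
    entry_hash = hashlib.sha256((prev_hash + message).encode("utf-8")).hexdigest()
    chain.append({"message": message, "prev": prev_hash, "hash": entry_hash})


def chain_is_intact(chain):
    """Recompute every link; an edited or reordered entry breaks the chain."""
    prev_hash = "0" * 64
    for entry in chain:
        digest = hashlib.sha256((prev_hash + entry["message"]).encode("utf-8"))
        if entry["prev"] != prev_hash or entry["hash"] != digest.hexdigest():
            return False
        prev_hash = entry["hash"]
    return True
```

Verifying a deployed model then amounts to recomputing the tag over the deployed bytes and comparing it with the approved tag, while the chained log makes any later edit to the training history detectable.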

Versioning extends to GitHub Copilot through transparent model update processes that notify users when underlying models change, potentially affecting suggestion patterns. This transparency allows organizations to evaluate whether model updates align with their risk tolerance and usage policies before deployment. Network engineers studying CCIE evolution recognize parallel versioning challenges in network operating systems, where configuration reproducibility and change tracking prove essential for maintaining stable, secure infrastructure across distributed environments.

Privacy Preservation in Collaborative AI Systems

Privacy considerations in AI-assisted development involve protecting both the code developers write and the training data underlying model suggestions. GitHub Copilot does not run its models locally: the editor extension sends surrounding code context to a hosted service to generate suggestions, so proprietary code does cross the organizational boundary during normal operation. Privacy protection therefore rests on plan-level data handling terms and on configuration controls, such as settings that exclude specified files from being used as context, rather than on keeping inference on the developer's machine.

Federated learning and edge inference, which minimize data exposure while still enabling model improvement through aggregated insights, remain a broader industry direction rather than a description of how Copilot works today. Privacy preservation of that kind often introduces performance tradeoffs, as local processing requires more powerful endpoint devices and limits the sophistication of models that can run effectively. Developers comparing CCNA and DevNet paths encounter similar privacy considerations in network automation, where sensitive configuration data must remain protected while still enabling intelligent automation and optimization capabilities.

Regulatory Compliance and Certification Frameworks

Emerging regulatory frameworks for AI systems impose requirements around transparency, fairness, accountability, and safety that affect both hardware platforms and applications like GitHub Copilot. The GH-300's design anticipates these requirements through built-in audit capabilities, explainability support, and safety monitoring that align with proposed regulations in major jurisdictions. This proactive approach reduces compliance burdens for organizations deploying AI systems, as hardware capabilities directly support regulatory obligations without requiring extensive custom development.

GitHub Copilot's compliance posture involves ongoing assessment against evolving standards, including European Union AI Act provisions, sector-specific regulations in healthcare and finance, and emerging frameworks in other jurisdictions. Microsoft's approach emphasizes documentation, risk assessment, and transparent limitation acknowledgment that demonstrate responsible development practices even where formal certification regimes remain under development. Cloud professionals preparing for Google Associate Cloud Engineer certification encounter similar compliance considerations across cloud platforms, recognizing that certification demonstrates competency in managing systems that meet regulatory and industry standards.

Stakeholder Engagement and Feedback Integration

Responsible AI development requires ongoing engagement with diverse stakeholders including users, affected communities, regulators, and domain experts who provide critical perspectives on system impact. Microsoft's approach to GitHub Copilot includes public discourse channels, academic partnerships, and user research programs that surface concerns and suggestions for improvement. This engagement informs product decisions around features like attribution, filtering policies, and transparency mechanisms that might not emerge from internal development processes alone.

Feedback integration challenges include distinguishing representative concerns from outlier perspectives, balancing competing stakeholder interests, and maintaining development velocity while incorporating diverse input. Product teams must develop systematic processes for evaluating feedback against product principles and technical constraints, making transparent decisions about which suggestions to implement and which to decline with clear rationale. Marketing professionals building Google Ads careers employ similar stakeholder management skills, gathering feedback from advertisers, users, and platform partners to guide feature development and policy refinement.

Performance Optimization Within Responsibility Constraints

Optimizing AI system performance while maintaining responsibility commitments requires careful engineering that avoids shortcut solutions that sacrifice safety or fairness for speed. The GH-300's architecture demonstrates this through specialized accelerators for responsibility-related computations that prevent them from becoming bottlenecks degrading overall system performance. This design philosophy rejects the false choice between responsible AI and high-performance AI, instead pursuing both objectives simultaneously through thoughtful technical solutions.

GitHub Copilot's performance optimization involves sophisticated caching, predictive prefetching, and progressive refinement that deliver responsive suggestions while maintaining filtering and safety checks. Engineers working on these systems continuously profile performance characteristics, identifying optimization opportunities that preserve protective mechanisms while reducing latency. Infrastructure specialists managing Azure server migration apply similar performance optimization principles, seeking efficiency gains that maintain security controls and compliance requirements rather than disabling safeguards to achieve marginal speed improvements.

Incident Response and Remediation Protocols

Even well-designed AI systems occasionally produce problematic outputs requiring rapid response and remediation. GitHub Copilot's incident response protocols include mechanisms for users to flag concerning suggestions, automated detection of unusual system behavior, and processes for investigating reported issues. These protocols distinguish between isolated incidents requiring localized fixes and systemic problems necessitating broader model updates or architectural changes.

Effective incident response requires clear escalation pathways, documented decision-making authority, and transparent communication with affected users about problems and remediation steps. Organizations must balance rapid response against thorough investigation, avoiding hasty changes that introduce new problems while moving quickly enough to prevent ongoing harm. Security teams implementing Microsoft Sentinel foundations develop similar incident response capabilities for security events, recognizing that detection and response speed often determines whether incidents remain contained or escalate into major breaches.

Cross-Platform Integration and Ecosystem Considerations

The GH-300's responsible AI capabilities extend beyond isolated deployments to support integrated ecosystems where multiple AI systems interact. This requires standardized interfaces for sharing audit data, coordinating governance policies, and maintaining consistent behavior across platforms. Microsoft's broader AI infrastructure strategy emphasizes these integration points, enabling organizations to implement unified governance frameworks spanning cloud services, edge deployments, and development tools like GitHub Copilot.

Ecosystem integration challenges include reconciling different responsibility frameworks, managing data flows across trust boundaries, and maintaining performance when multiple verification layers accumulate across system interfaces. Industry standards development in this area remains nascent, with organizations often creating proprietary integration patterns that may hinder interoperability. Cloud architects comparing AWS and Azure infrastructure navigate similar ecosystem challenges, evaluating how different platforms' security models, governance tools, and compliance certifications align with organizational requirements across heterogeneous technology portfolios.

Long-Term Sustainability of Responsible AI Investments

The GH-300's architecture embeds responsibility capabilities at the hardware level, reducing recurring costs associated with software-layer approaches that require constant maintenance and updates. This economic model makes responsible AI more sustainable by lowering marginal costs per deployment while maintaining protective capabilities that might otherwise face budget pressures during economic downturns. GitHub Copilot's business model similarly must support ongoing investment in safety research, model refinement, and governance infrastructure that may not directly generate revenue but prove essential for maintaining user trust and regulatory compliance.

Microsoft's approach treats these investments as fundamental product requirements rather than optional enhancements, recognizing that responsibility failures carry existential risks to product viability. Security professionals evaluating Azure versus AWS security consider similar long-term investment patterns, recognizing that platforms demonstrating sustained security commitment through consistent enhancement and rapid vulnerability response merit greater confidence than those treating security as periodic initiatives.

Cultural Transformation Through Tool Design

AI-assisted development tools shape developer culture and practices in ways extending far beyond immediate productivity impacts. GitHub Copilot's design choices around attribution, transparency, and user control model responsible AI principles that influence how developers think about appropriate technology use. This cultural influence multiplies as Copilot-trained developers carry these norms to other projects and organizations, creating ripple effects that extend the tool's impact on responsible AI adoption throughout the software industry.

Cultural transformation requires intentional design that makes responsible choices the default path while still permitting flexibility for legitimate edge cases. Product teams must resist pressure to prioritize convenience over responsibility, maintaining protective mechanisms even when users request their removal or relaxation. Collaboration specialists implementing Microsoft Teams best practices observe similar cultural dynamics, where tool design shapes organizational communication norms and collaboration patterns in ways that persist even after teams adopt different platforms.

Measuring Responsible AI Outcomes and Impact

Quantifying responsible AI implementation effectiveness requires metrics extending beyond traditional performance indicators like accuracy and throughput. Organizations deploying GH-300 hardware must develop measurement frameworks capturing fairness across demographic groups, transparency levels users actually utilize, and privacy protection efficacy under various attack scenarios. These metrics inform iterative improvement cycles, revealing which responsibility mechanisms deliver measurable value versus those consuming resources without corresponding benefits.

GitHub Copilot's impact measurement encompasses acceptance rates across different suggestion types, user satisfaction with transparency features, and incident rates related to security or licensing concerns. Microsoft tracks these metrics longitudinally to identify trends and validate that product changes improve responsible AI outcomes rather than inadvertently degrading them. Teams comparing Zoom versus Microsoft Teams employ similar comparative metrics, evaluating platforms against multidimensional criteria including accessibility, privacy, and inclusive design rather than focusing narrowly on technical features or pricing.
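Metrics of this kind reduce to simple per-group rates. The sketch below computes an acceptance rate for each group and the largest gap between groups; the same arithmetic applies whether the groups are suggestion types or demographic groups. The event format and both function names are assumptions made for illustration, not a description of how Microsoft tracks these figures.

```python
from collections import defaultdict


def rate_by_group(events):
    """events: iterable of (group, accepted) pairs; returns acceptance rate per group."""
    shown = defaultdict(int)
    accepted = defaultdict(int)
    for group, was_accepted in events:
        shown[group] += 1
        accepted[group] += int(was_accepted)
    return {g: accepted[g] / shown[g] for g in shown}


def largest_gap(rates):
    """Difference between the highest and lowest per-group rate."""
    return max(rates.values()) - min(rates.values())
```

Tracking `largest_gap` over successive releases gives a single number that shows whether a product change widened or narrowed the disparity between groups.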

Attribution Systems and Intellectual Property Protection

Attribution in AI-generated content represents one of the most challenging responsible AI problems, requiring systems to identify when outputs closely resemble training data and provide appropriate credit. GitHub Copilot's attribution features analyze suggestion similarity to known code repositories, surfacing matches that exceed certain thresholds and directing users to original sources. This transparency allows developers to make informed decisions about whether suggested code requires explicit licensing consideration or attribution beyond standard open source compliance practices.

Technical implementation involves efficient similarity search across billions of code snippets, balancing comprehensiveness against latency requirements that affect user experience. The system must handle edge cases including refactored code that maintains functional similarity despite syntactic differences, and common patterns that appear across many repositories without clear original authorship. Data professionals examining Power BI salary foundations recognize similar attribution challenges in analytical work, where insights build on prior analyses and distinguishing original contribution from derivative work requires careful judgment about meaningful innovation versus incremental refinement.
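The thresholded similarity check described above can be sketched with token shingles and Jaccard similarity. This is a simplified stand-in, not the method Copilot uses: a production system searching billions of snippets would need an index rather than a linear scan, and this sketch does not catch refactored code with renamed identifiers. The corpus contents, file names, and the 0.6 threshold are hypothetical.

```python
import re


def shingles(code, k=3):
    """Split code into tokens and return the set of k-token windows."""
    tokens = re.findall(r"[A-Za-z_]\w*|\d+|\S", code)
    if len(tokens) < k:
        return {tuple(tokens)} if tokens else set()
    return {tuple(tokens[i:i + k]) for i in range(len(tokens) - k + 1)}


def jaccard(a, b):
    """Jaccard similarity of two sets; 0.0 when both are empty."""
    if not a and not b:
        return 0.0
    return len(a & b) / len(a | b)


def find_matches(suggestion, corpus, threshold=0.6):
    """Return (source, score) pairs at or above the threshold, best first."""
    target = shingles(suggestion)
    scored = [(name, jaccard(target, shingles(src))) for name, src in corpus.items()]
    return sorted((p for p in scored if p[1] >= threshold), key=lambda p: -p[1])


# Hypothetical corpus of known snippets keyed by their source location.
corpus = {
    "repo_a/util.py": "def add(a, b):\n    return a + b",
    "repo_b/io.py": "def read(path):\n    return open(path).read()",
}
matches = find_matches("def add(a, b): return a + b", corpus)
```

Because tokenization ignores whitespace, the reformatted one-line suggestion still matches its source exactly, while the unrelated snippet falls below the threshold.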

Multimodal AI and Expanded Responsibility Surfaces

The GH-300's architecture supports multimodal AI systems that process combinations of text, code, images, and structured data, introducing new responsibility considerations beyond text-only models. Each modality carries distinct bias patterns, privacy risks, and security vulnerabilities requiring tailored protective mechanisms. Multimodal systems also introduce interaction effects where biases or errors compound across modalities, creating emergent problems that don't exist in unimodal implementations.

GitHub Copilot's evolution toward multimodal assistance that understands diagrams, documentation, and interface mockups alongside code expands its responsibility surface significantly. Visual content introduces copyright and appropriateness concerns distinct from code-focused filtering, while natural language processing of documentation raises questions about technical accuracy and potential misinformation. Organizations pursuing AppDynamics professional implementation encounter multimodal monitoring challenges, correlating metrics across application performance, user experience, and business outcomes requiring integrated analysis that maintains data quality and interpretability across diverse telemetry streams.

Federated Learning and Distributed Responsibility

Federated learning enables AI model improvement using distributed data that is never centralized, addressing privacy concerns while still benefiting from diverse training examples. The GH-300 includes specialized support for federated learning protocols, implementing secure aggregation and differential privacy mechanisms that protect individual contributions while enabling collective model enhancement. This approach particularly benefits organizations handling sensitive data subject to strict regulatory constraints that prevent cloud-based training approaches.
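The aggregation step in such a protocol can be sketched as clipped averaging with added Gaussian noise, a common building block for differentially private federated learning. This is a minimal sketch under stated assumptions: updates are plain lists of floats, the `max_norm` and `noise_std` values are placeholders not calibrated to any privacy budget, and cryptographic secure aggregation is not implemented here.

```python
import math
import random


def clip(update, max_norm):
    """Scale an update down so its L2 norm does not exceed max_norm."""
    norm = math.sqrt(sum(x * x for x in update))
    if norm <= max_norm:
        return list(update)
    scale = max_norm / norm
    return [x * scale for x in update]


def aggregate(updates, max_norm=1.0, noise_std=0.0, rng=None):
    """Average clipped client updates after adding Gaussian noise to the sum."""
    rng = rng or random.Random()
    clipped = [clip(u, max_norm) for u in updates]
    dim = len(clipped[0])
    summed = [sum(u[i] for u in clipped) for i in range(dim)]
    noisy = [s + rng.gauss(0.0, noise_std) for s in summed]
    return [v / len(clipped) for v in noisy]


# Two hypothetical client updates; the first is clipped from norm 5 to norm 1.
result = aggregate([[3.0, 4.0], [0.0, 0.0]], max_norm=1.0, noise_std=0.0)
```

Clipping bounds how much any single participant can shift the aggregate, which is what makes the added noise meaningful as a privacy mechanism.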

GitHub Copilot could potentially leverage federated learning to improve suggestions based on proprietary organizational codebases without exposing sensitive intellectual property to Microsoft. This would require careful protocol design ensuring that aggregated model updates don't leak information about specific code patterns or architectural decisions organizations wish to keep confidential. Professionals studying business architecture analysis appreciate federated approaches that enable industry-wide insight aggregation without compromising competitive advantage through oversharing of strategic information.

Continuous Validation and Testing Regimes

Responsible AI systems require ongoing validation rather than one-time pre-deployment testing, as model behavior can drift due to data distribution changes, adversarial attacks, or unintended feedback loops. The GH-300's monitoring capabilities support continuous validation through real-time statistical testing that compares current model behavior against baseline expectations. Significant deviations trigger alerts enabling investigation before subtle degradation accumulates into obvious failures.
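Comparing current behavior against a baseline can be as simple as a two-proportion z-test on a rate such as suggestion acceptance. The sketch below assumes counts are collected elsewhere; the function names and the alert threshold of 3.0 are illustrative choices, not documented GH-300 behavior.

```python
import math


def two_proportion_z(base_success, base_total, cur_success, cur_total):
    """z-statistic for the difference between current and baseline rates."""
    p_base = base_success / base_total
    p_cur = cur_success / cur_total
    pooled = (base_success + cur_success) / (base_total + cur_total)
    se = math.sqrt(pooled * (1 - pooled) * (1 / base_total + 1 / cur_total))
    if se == 0.0:
        return 0.0
    return (p_cur - p_base) / se


def drift_alert(base_success, base_total, cur_success, cur_total, z_threshold=3.0):
    """True when the current rate deviates from baseline beyond the threshold."""
    z = two_proportion_z(base_success, base_total, cur_success, cur_total)
    return abs(z) >= z_threshold


# Hypothetical counts: baseline 300/1000 accepted, current window 200/1000.
alert = drift_alert(300, 1000, 200, 1000)
```

A high threshold keeps false alarms rare when the check runs continuously; teams running many such checks may also want a multiple-comparison correction.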

GitHub Copilot implements continuous validation through synthetic test cases that probe model behavior across diverse scenarios, canary deployments that limit exposure of new model versions, and automated rollback when acceptance metrics deteriorate beyond acceptable thresholds. These practices adapt software engineering discipline to AI systems, recognizing that models require similar rigor to traditional code despite their probabilistic nature. Teams building business architecture practitioner skills apply analogous validation thinking to business process changes, testing new workflows with limited populations before broad rollout while maintaining rollback capabilities when innovations prove problematic.
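The canary-and-rollback pattern can be sketched in two small functions: a deterministic assignment of users to the canary group and a rule that compares canary and stable acceptance rates. Everything here is hypothetical, including the hashing scheme, the percentage, and the 10% relative-drop threshold; it is not a description of how Copilot is actually deployed.

```python
import hashlib


def in_canary(user_id, canary_percent):
    """Deterministically place a user in the canary group by hashing their id."""
    digest = hashlib.sha256(user_id.encode("utf-8")).digest()
    bucket = int.from_bytes(digest[:4], "big") % 100
    return bucket < canary_percent


def should_roll_back(stable_rate, canary_rate, max_relative_drop=0.10):
    """True when the canary's rate falls too far below the stable version's."""
    if stable_rate <= 0:
        return False
    return (stable_rate - canary_rate) / stable_rate > max_relative_drop


# Hypothetical acceptance rates for the stable and canary model versions.
rollback = should_roll_back(stable_rate=0.30, canary_rate=0.25)
```

Hashing the user id keeps each user on the same model version across sessions, which keeps the comparison between the two groups clean.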

Human-AI Collaboration Patterns and Interface Design

Effective responsible AI requires interface designs that facilitate appropriate human-AI collaboration rather than encouraging over-reliance or misuse. GitHub Copilot's interface deliberately positions AI suggestions as one input among many, maintaining visible alternatives including writing code manually, searching documentation, or consulting colleagues. This design prevents automation bias where users uncritically accept AI outputs simply because automation generated them.

Collaboration pattern design involves understanding cognitive biases affecting human judgment in AI-assisted contexts, including anchoring effects where initial AI suggestions disproportionately influence final decisions. Interface designers must balance making AI assistance readily available against preventing it from overwhelming alternative approaches that may prove superior in specific contexts. Specialists developing business architecture strategies encounter similar collaboration challenges, designing stakeholder engagement processes that leverage expert input without allowing dominant voices to suppress valuable minority perspectives.

Adversarial Robustness and Security Hardening

AI systems face unique security threats including adversarial examples crafted to cause misclassification, data poisoning attacks corrupting training sets, and model extraction attacks stealing intellectual property embedded in trained models. The GH-300 incorporates adversarial robustness features including input validation circuits that detect statistical anomalies characteristic of adversarial examples and secure enclaves protecting model weights from unauthorized extraction.

GitHub Copilot's security posture addresses these threats through input sanitization that filters unusual prompts potentially designed to elicit problematic suggestions, rate limiting preventing systematic model probing, and access controls restricting who can submit training data or observe detailed model behavior. Security hardening represents ongoing work as attackers develop novel techniques requiring defensive adaptation. Professionals building customer success manager capabilities develop analogous adversarial thinking, anticipating how malicious actors might abuse platform features and designing preventive controls before exploitation occurs.
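Rate limiting of the kind mentioned above is often implemented as a token bucket. The following is a generic sketch of that technique with the clock passed in explicitly so the behavior is easy to test; the capacity and refill values are assumptions, and nothing here reflects Copilot's actual limits.

```python
class TokenBucket:
    """Allow bursts up to `capacity`, refilling at `refill_per_second`."""

    def __init__(self, capacity, refill_per_second, now=0.0):
        self.capacity = float(capacity)
        self.refill_per_second = float(refill_per_second)
        self.tokens = float(capacity)
        self.last = now

    def allow(self, now):
        """Consume one token if available; return whether the request may proceed."""
        elapsed = max(0.0, now - self.last)
        self.tokens = min(self.capacity, self.tokens + elapsed * self.refill_per_second)
        self.last = max(self.last, now)
        if self.tokens >= 1.0:
            self.tokens -= 1.0
            return True
        return False


# Hypothetical per-client bucket: burst of 2 requests, 1 request per second after.
bucket = TokenBucket(capacity=2, refill_per_second=1.0)
decisions = [bucket.allow(0.0), bucket.allow(0.0), bucket.allow(0.0), bucket.allow(1.0)]
```

Keeping one bucket per client makes systematic probing slower without blocking ordinary bursts of legitimate use.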

Explainability Techniques and Interpretability Research

Explainability in AI systems ranges from local explanations for individual predictions to global interpretability understanding overall model behavior patterns. The GH-300 supports various explainability techniques through specialized circuits accelerating attention visualization, gradient computation for saliency mapping, and counterfactual generation identifying minimal input changes that alter outputs. These capabilities reduce the computational overhead traditionally associated with explainability, making it more practical for production deployment.
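A local explanation can be illustrated without any model internals by occlusion: remove each input token in turn and record how much the score changes. This is a swapped-in, model-agnostic technique rather than the gradient-based saliency mentioned above, and `toy_score` with its weights is a hypothetical stand-in for a real model.

```python
def occlusion_saliency(tokens, score_fn):
    """Score drop caused by removing each token; larger means more influential."""
    base = score_fn(tokens)
    return [base - score_fn(tokens[:i] + tokens[i + 1:]) for i in range(len(tokens))]


def toy_score(tokens):
    """Hypothetical scoring function standing in for a real model."""
    weights = {"sql": 2.0, "query": 1.0}
    return sum(weights.get(token, 0.0) for token in tokens)


saliency = occlusion_saliency(["run", "sql", "query"], toy_score)
```

Occlusion needs one extra model call per token, which is the kind of overhead that hardware acceleration of explainability workloads would aim to reduce.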

GitHub Copilot's explainability features include highlighting which portions of user context most influenced suggestions, showing alternative suggestions the model considered, and providing confidence indicators that help users calibrate trust appropriately. Research continues into improving explanation quality, as current techniques often sacrifice accuracy for comprehensibility or provide technically correct but practically unhelpful explanations. Analysts preparing for Alibaba Cloud certification study similar interpretability challenges in complex distributed systems, learning to extract meaningful insights from vast telemetry data without oversimplifying to the point of misrepresenting system behavior.

Lifecycle Management From Development Through Retirement

Responsible AI extends across entire system lifecycles including development, deployment, operation, and eventual retirement. The GH-300's architecture supports this through versioning capabilities that maintain historical model records, migration tools that facilitate updating deployed systems, and secure deletion mechanisms ensuring retired models cannot be reconstructed from residual data. Lifecycle management prevents situations where organizations lose track of deployed AI systems or fail to retire models when they become obsolete or problematic.

GitHub Copilot's lifecycle includes transparent communication about model updates, migration assistance helping organizations transition to new versions, and deprecation notices providing adequate time for adjustment before older model versions become unavailable. This professional lifecycle management mirrors practices in traditional software while adapting to AI's unique characteristics including the difficulty of precisely specifying model behavior and the potential for subtle degradation over time. Obtaining Alibaba Cloud ACS certification teaches similar lifecycle management for cloud infrastructure, planning not only initial deployment but ongoing maintenance, capacity adjustment, and eventual decommissioning.

Sector-Specific Responsibility Adaptations

Different industries face distinct responsible AI requirements reflecting varying regulatory frameworks, risk profiles, and stakeholder expectations. Healthcare AI demands rigorous safety validation and clinical efficacy demonstration, financial services prioritize fairness and discrimination prevention, while education emphasizes transparency and pedagogical appropriateness. The GH-300's flexible architecture allows organizations to configure responsibility mechanisms matching sector-specific requirements without extensive custom development.

GitHub Copilot's sector adaptations include specialized filtering for regulated industries like healthcare where suggested code must meet stringent safety standards, and enhanced attribution features for academic contexts where plagiarism concerns demand clear source identification. Microsoft develops these adaptations through partnerships with industry experts who provide domain knowledge that technical teams might lack. Specialists pursuing Alibaba Cloud security certification recognize similar sector-specific security requirements, learning how financial services, healthcare, and government sectors each impose distinct compliance obligations beyond generic security best practices.

International Perspectives and Cultural Considerations

Responsible AI principles manifest differently across cultural contexts reflecting varying values around privacy, autonomy, transparency, and fairness. Western frameworks often emphasize individual rights and consent, while other traditions prioritize collective welfare and social harmony. The GH-300's configurable governance frameworks accommodate these diverse perspectives, allowing organizations to implement responsibility mechanisms aligned with local norms and regulations rather than imposing universal standards that may fit some contexts poorly.

GitHub Copilot's international deployment involves adapting content filtering to regional sensitivities, supporting multiple languages with culturally appropriate interaction patterns, and respecting jurisdictional differences in intellectual property norms and developer rights. This cultural responsiveness requires ongoing dialogue with diverse user communities and willingness to implement region-specific variations even when this increases product complexity. Professionals studying Alibaba Cloud infrastructure encounter similar internationalization challenges, designing systems that comply with data sovereignty requirements while maintaining operational consistency across global deployments.

Economic Models Supporting Responsible AI Investment

Sustaining responsible AI requires business models that fund ongoing investment in safety research, ethical review, and protective mechanism maintenance. The GH-300's pricing includes premiums for responsibility features, creating revenue streams supporting their continued development and improvement. This explicit cost allocation makes responsibility economically sustainable rather than treating it as overhead competing with profit-maximizing features for limited budgets.

GitHub Copilot's subscription pricing must balance affordability promoting widespread adoption against generating sufficient revenue for responsibility investments that may not directly enhance user-visible functionality. Microsoft's approach treats responsible AI as product differentiation attracting organizations that view AI governance as essential rather than optional. Professionals earning Alibaba Cloud professional certification understand similar economic considerations in cloud platforms, recognizing that premium features like advanced security or compliance tools justify higher pricing for organizations valuing these capabilities.

Open Source Contribution and Community Collaboration

Open source development offers unique opportunities and challenges for responsible AI implementation. GitHub Copilot's relationship with open source includes contributing to transparency tools, supporting research initiatives examining AI-assisted development impacts, and participating in standards development that could enable interoperability across AI coding assistants. This engagement balances commercial interests against community benefit, recognizing that healthy open source ecosystems ultimately serve all participants including commercial providers.

The GH-300 reference implementations and documentation include open source components enabling academic research and independent verification of responsibility claims. This transparency builds trust while accelerating innovation as researchers identify improvements and alternative approaches. Organizations supporting open source often find that contributions enhance their reputation and attract talent valuing collaborative development cultures. Teams preparing for professional cloud manager credentials study similar open source dynamics in cloud-native technologies, understanding how projects like Kubernetes succeed through vendor collaboration and community governance balancing commercial and collective interests.

Edge Deployment and Resource-Constrained Environments

Deploying responsible AI in edge environments with limited computational resources requires efficiency optimizations that maintain protective mechanisms despite constraints. The GH-300 architecture supports edge deployment through power-efficient responsibility circuits and model compression techniques preserving safety properties while reducing memory footprints. These capabilities enable responsible AI in mobile devices, embedded systems, and remote installations where connectivity or power limitations preclude cloud-dependent approaches.
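As one concrete example of model compression, symmetric 8-bit quantization stores each weight as a small integer plus a shared scale. The sketch below shows only the footprint-reducing step on a plain list of floats; confirming that safety-relevant behavior survives compression would be a separate evaluation, and nothing here is specific to the GH-300.

```python
def quantize(weights, bits=8):
    """Map floats to signed integers sharing one scale (symmetric quantization)."""
    qmax = 2 ** (bits - 1) - 1
    max_abs = max((abs(w) for w in weights), default=0.0)
    scale = max_abs / qmax if max_abs > 0 else 1.0
    quantized = [max(-qmax, min(qmax, round(w / scale))) for w in weights]
    return quantized, scale


def dequantize(quantized, scale):
    """Recover approximate float weights from integers and the shared scale."""
    return [q * scale for q in quantized]


# Hypothetical weights; each restored value is within half a step of the original.
weights = [1.0, -0.5, 0.0, 0.25]
quantized, scale = quantize(weights)
restored = dequantize(quantized, scale)
```

The rounding error per weight is bounded by half the scale, which gives a starting point for reasoning about how much a compressed model can diverge from the original.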

GitHub Copilot's edge considerations include offline operation modes that maintain basic functionality when network access proves unreliable, and progressive enhancement that adds sophisticated features when resources permit while ensuring core capabilities remain available universally. Edge deployment often involves accepting graceful degradation where full responsibility mechanisms may be unavailable, requiring clear communication about capability limitations in resource-constrained contexts. Engineers pursuing the AWS advanced networking specialty design similar edge architectures balancing local processing against cloud connectivity, optimizing data flows and computation placement for scenarios spanning fully connected to intermittently offline operations.

Skills Development and Workforce Training

Responsible AI implementation requires workforce capabilities extending beyond traditional software engineering or data science expertise. Organizations must develop internal capacity for ethical AI review, bias testing, transparency mechanism design, and incident response specific to AI systems. The GH-300's educational materials include training modules on responsible AI operations, helping infrastructure teams understand their role in maintaining protective mechanisms and recognizing concerning patterns warranting escalation.

GitHub Copilot's impact on developer skill development includes both positive aspects like accelerating learning through exposure to diverse code patterns and concerns about potential deskilling if developers rely too heavily on AI assistance without developing independent problem-solving capabilities. Responsible deployment involves guidance on appropriate use balancing productivity benefits against skill development imperatives. AWS AI practitioner training addresses similar workforce development challenges, creating curricula that build practical AI implementation skills while instilling responsible development practices and ethical awareness.

Legal Frameworks and Liability Considerations

Emerging legal frameworks around AI systems raise questions about liability when AI-assisted tools produce problematic outputs. GitHub Copilot's terms of service address these concerns through provisions clarifying responsibility allocation between Microsoft, organizations deploying the tool, and individual developers using it. These legal instruments reflect ongoing uncertainty about appropriate liability models for AI systems that operate with partial autonomy but under human oversight. The GH-300's audit capabilities support liability management by providing evidence of reasonable care in AI deployment, potentially serving as defense against negligence claims.

Documentation showing systematic monitoring, incident response, and continuous improvement demonstrates organizational commitment to responsible AI beyond minimal compliance. Legal departments increasingly engage with AI deployment decisions, requiring technical teams to maintain comprehensive records supporting potential future litigation or regulatory investigation. This documentation discipline mirrors practices in regulated industries where compliance demonstration requires extensive audit trails and procedural evidence.

Algorithmic Accountability and Contestability Mechanisms

Algorithmic accountability requires mechanisms allowing affected individuals to understand, question, and contest AI decisions impacting them. While GitHub Copilot's suggestions don't constitute high-stakes decisions like loan approvals or medical diagnoses, accountability principles still apply through transparency about how suggestions are generated and channels for users to report problems. The system's feedback mechanisms allow developers to flag inappropriate suggestions, contributing to continuous improvement while providing individual recourse when AI assistance proves unhelpful or harmful.

The GH-300's architecture supports accountability through comprehensive logging that enables retrospective analysis of AI decision chains, identifying which inputs, model versions, and parameters produced specific outputs. This auditability proves essential when organizations must explain AI behavior to regulators, customers, or internal stakeholders questioning specific outcomes. Professionals preparing for AWS cloud practitioner certification learn similar accountability concepts in cloud infrastructure, understanding how audit trails, access logs, and configuration management enable demonstrating compliance with security policies and regulatory requirements.
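An audit trail that ties each output to its inputs, model version, and parameters can be sketched as an append-only log in which every record includes a hash of the previous one, so later tampering is detectable. The field names and the hash-chain design are assumptions chosen for illustration, not a documented GH-300 log format.

```python
import hashlib
import json

GENESIS = "0" * 64


def _digest(body):
    """Stable SHA-256 over a record body serialized with sorted keys."""
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode("utf-8")).hexdigest()


def append_record(log, model_version, params, input_text, output_text):
    """Append a record that stores content hashes and links to the prior record."""
    body = {
        "model_version": model_version,
        "params": params,
        "input_sha256": hashlib.sha256(input_text.encode("utf-8")).hexdigest(),
        "output_sha256": hashlib.sha256(output_text.encode("utf-8")).hexdigest(),
        "prev_hash": log[-1]["record_hash"] if log else GENESIS,
    }
    record = dict(body, record_hash=_digest(body))
    log.append(record)
    return record


def verify(log):
    """Check every record's hash and its link to the preceding record."""
    prev = GENESIS
    for record in log:
        body = {k: v for k, v in record.items() if k != "record_hash"}
        if body["prev_hash"] != prev or _digest(body) != record["record_hash"]:
            return False
        prev = record["record_hash"]
    return True


# Hypothetical usage with placeholder model versions and parameters.
log = []
append_record(log, "v1", {"temperature": 0.2}, "prompt one", "output one")
append_record(log, "v2", {"temperature": 0.2}, "prompt two", "output two")
```

Storing hashes rather than raw prompts keeps sensitive content out of the log while still letting an auditor confirm that a given input and output pair matches a record.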

Model Cards and Documentation Standards

Model cards provide standardized documentation describing AI system capabilities, limitations, intended use cases, and evaluation results across relevant metrics including fairness and accuracy. GitHub Copilot's model card details training data composition, known limitations like weaker performance in less common programming languages, and guidance on appropriate use contexts. This documentation helps organizations make informed decisions about whether Copilot aligns with their specific requirements and risk tolerance. The GH-300's documentation includes performance benchmarks across responsibility metrics, energy consumption profiles, and configuration guidance for various deployment scenarios.
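A model card can also be kept as structured data so that missing sections are caught automatically. The sketch below uses a small dataclass whose field names follow the categories listed above; the example values are placeholders and do not reproduce any published model card.

```python
import json
from dataclasses import asdict, dataclass, field


@dataclass
class ModelCard:
    name: str
    intended_use: str
    training_data_summary: str
    known_limitations: list = field(default_factory=list)
    evaluation: dict = field(default_factory=dict)

    def missing_sections(self):
        """Names of fields left empty, in declaration order."""
        return [key for key, value in asdict(self).items() if not value]

    def to_json(self):
        """Serialize the card for publication or comparison."""
        return json.dumps(asdict(self), indent=2, sort_keys=True)


# Placeholder card with two sections intentionally left empty.
card = ModelCard(
    name="example-code-assistant",
    intended_use="Code completion inside an editor, reviewed by a developer.",
    training_data_summary="",
    known_limitations=["Weaker results in less common programming languages."],
)
gaps = card.missing_sections()
```

Running a completeness check like `missing_sections` in a release pipeline is one way to keep documentation from lagging behind model updates.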

Standardized documentation facilitates comparison across hardware platforms and informs procurement decisions balancing performance, cost, and responsibility capabilities. As model card practices mature, expectations around documentation comprehensiveness and accessibility continue rising, requiring ongoing investment in documentation quality. Engineers pursuing AWS CloudOps certification appreciate thorough documentation enabling effective system operation, recognizing that excellent documentation often distinguishes professionally managed platforms from poorly documented alternatives.

Inclusive Design and Accessibility Considerations

Inclusive AI design ensures systems serve diverse users including those with disabilities, limited technical expertise, or non-traditional backgrounds. GitHub Copilot's interface implements accessibility features including keyboard navigation, screen reader compatibility, and customizable suggestion presentation formats accommodating different cognitive styles and preferences. These features reflect recognition that developer diversity benefits software quality and innovation, and that making tools accessible to broad audiences advances both equity and industry interests.

The GH-300's inclusive design considerations include support for assistive technologies, compatibility with accessibility-focused development environments, and documentation in multiple formats accommodating different learning styles. Inclusive design often reveals broader usability improvements benefiting all users, not just those with specific accessibility needs. Organizations prioritizing inclusion frequently discover that diverse teams identify problems and opportunities that homogeneous groups miss. Specialists studying AWS data engineering encounter similar inclusive design principles in data pipelines, ensuring systems accommodate various data formats, quality levels, and integration patterns rather than assuming idealized inputs.

Environmental Impact Beyond Energy Efficiency

Comprehensive environmental responsibility extends beyond operational energy consumption to encompass manufacturing impacts, e-waste generation, and lifecycle resource utilization. The GH-300's environmental profile includes assessments of rare earth mineral usage, manufacturing carbon footprint, and recyclability provisions facilitating responsible end-of-life disposal. These considerations reflect growing recognition that technology's environmental impact requires holistic evaluation spanning extraction, production, operation, and disposal phases.

GitHub Copilot's environmental footprint involves both direct computational costs of inference and indirect impacts from increased code generation potentially enabling more software development with associated downstream effects. Measuring these impacts requires sophisticated lifecycle assessment accounting for avoided emissions from developer productivity gains and potential rebound effects where efficiency improvements enable increased total consumption. Organizations with serious sustainability commitments increasingly demand environmental transparency from technology vendors. Professionals obtaining AWS developer associate credentials learn to optimize application resource consumption, recognizing that efficient code reduces environmental impact while improving user experience and controlling operational costs.

Participatory Design and Stakeholder Inclusion

Participatory design involves stakeholders beyond development teams in shaping AI systems, particularly including communities likely to be affected by deployment. GitHub Copilot's development has included outreach to open source communities, academic researchers, and developer advocacy groups providing input on features, policies, and concerns. This engagement produces insights that internal teams might miss and builds trust through demonstrated willingness to incorporate external perspectives. The GH-300's design process similarly involved consultation with enterprise customers, infrastructure operators, and civil society organizations interested in AI governance.

Participatory approaches introduce challenges including managing diverse and sometimes conflicting input, protecting intellectual property while sharing sufficient information for meaningful feedback, and avoiding tokenistic consultation that solicits input without genuine commitment to incorporation. Effective participation requires resources and timelines accommodating iterative refinement based on stakeholder dialogue. Teams pursuing AWS DevOps professional certification apply similar collaborative approaches to infrastructure design, engaging application teams, security specialists, and business stakeholders to ensure solutions meet diverse requirements.

Testing Methodologies for Responsible AI Properties

Testing responsible AI properties requires methodologies beyond traditional software testing, as properties like fairness lack precise specifications and exhibit complex dependencies on data distributions and deployment contexts. GitHub Copilot's testing includes adversarial testing attempting to elicit problematic suggestions, fairness testing across diverse programming languages and frameworks, and longitudinal monitoring detecting degradation over time. These testing approaches complement traditional functional testing focused on correctness and performance. The GH-300's validation suite tests responsibility mechanisms across diverse workloads, stress conditions, and failure modes ensuring they function reliably under varied circumstances.

Testing challenges include defining acceptance criteria for subjective properties like transparency adequacy, generating representative test datasets covering relevant demographic groups and use cases, and automating tests that might traditionally require human judgment. As testing practices mature, industry convergence around standard test suites and benchmarks would facilitate comparison across systems and validation of vendor claims. Specialists in AWS machine learning develop similar specialized testing expertise, validating models across accuracy metrics, fairness criteria, and robustness under distribution shift.

Intellectual Diversity in AI Development Teams

Team composition influences AI system design through the perspectives, priorities, and blind spots team members bring. Diverse development teams spanning different demographic backgrounds, disciplinary training, and life experiences often produce more robust, inclusive systems than homogeneous teams. GitHub Copilot's development involves contributors with backgrounds in software engineering, machine learning, ethics, law, and human-computer interaction, bringing multifaceted perspectives to design decisions. Organizations developing responsible AI increasingly recognize that diversity represents not just an ethical imperative but a practical necessity for identifying issues before deployment.

This recognition drives recruitment and retention initiatives aimed at building diverse teams, though progress often remains slow due to pipeline limitations and industry culture challenges. Creating environments where diverse team members feel empowered to raise concerns and challenge assumptions requires intentional culture building beyond simply hiring diverse candidates. Professionals studying AWS machine learning engineering benefit from diverse educational experiences including ethics, social science, and domain expertise beyond pure technical skills.

Red Teaming and Adversarial Testing Practices

Red teaming involves dedicated teams attempting to break AI systems, elicit harmful outputs, or circumvent protective mechanisms through creative adversarial approaches. GitHub Copilot's red team exercises include attempts to generate malicious code, extract training data, and manipulate suggestions through carefully crafted prompts. These exercises reveal vulnerabilities that development teams focused on functionality might miss, enabling proactive remediation before malicious actors exploit weaknesses. The GH-300's red teaming includes both automated adversarial testing and human red teamers bringing creativity and intuition to identifying unexpected failure modes.

Effective red teaming requires independence from development teams to avoid groupthink, diverse skills spanning security, social engineering, and domain expertise, and psychologically safe environments where finding problems is celebrated rather than criticized. Organizations mature in their security practices institutionalize red teaming as a standard development phase rather than an optional validation exercise. Security professionals obtaining AWS security specialty certification develop similar adversarial thinking, learning to anticipate attack patterns and design defensive mechanisms addressing threats before exploitation.

Vendor Transparency and Procurement Due Diligence

Organizations procuring AI systems require transparency from vendors about capabilities, limitations, training data, and responsibility mechanisms to make informed decisions. The GH-300's technical documentation provides detailed specifications enabling enterprise evaluation of whether capabilities match requirements and responsibility features align with organizational policies. This transparency supports procurement processes where responsible AI considerations receive equivalent weight to traditional criteria like performance and cost. GitHub Copilot's transparency around licensing implications, data handling, and update policies helps organizations assess fit with their governance frameworks and risk appetites.

Vendor transparency challenges include protecting intellectual property while providing sufficient detail for meaningful evaluation, and standardizing disclosures enabling comparison across alternatives. As procurement practices mature around AI systems, expect increasing sophistication in vendor evaluation criteria and due diligence processes. Architects preparing for AWS solutions architect associate certification develop vendor evaluation skills, learning to assess cloud services against technical requirements, compliance needs, and total cost of ownership.

Insurance and Risk Transfer Mechanisms

AI systems' novel risk profiles have prompted development of specialized insurance products covering AI-related liabilities including erroneous decisions, privacy breaches, and intellectual property infringement. Organizations deploying GitHub Copilot may seek insurance addressing code suggestion risks, though insurance market maturity around AI-assisted development remains limited. Insurance availability and pricing provide market signals about risk perceptions, potentially incentivizing responsible AI practices through premium adjustments.

The GH-300's deployment in risk-sensitive applications may similarly drive insurance consideration as organizations seek to transfer liability beyond what vendor warranties cover. Insurance development requires actuarial data about AI incident frequencies and severities that remains sparse given limited deployment history. As markets mature and loss data accumulates, expect more sophisticated insurance products with underwriting processes evaluating organizational AI governance practices. Professionals pursuing AWS solutions architect professional credentials understand risk management holistically, including insurance as one element alongside technical controls, processes, and contingency planning.

Behavioral Economics and Choice Architecture

AI system design involves choice architecture decisions influencing user behavior through default settings, suggestion ordering, and friction introduction or removal. GitHub Copilot's choice architecture includes defaults requiring explicit acceptance of suggestions rather than automatic application, nudging users toward deliberate consideration rather than reflexive acceptance. These design choices reflect behavioral economics insights about how presentation affects decision quality. Understanding cognitive biases helps designers create interfaces that promote responsible use without being paternalistic or overly restrictive.

The GH-300's configuration interfaces similarly employ choice architecture principles, making secure, efficient configurations the default path while permitting customization when organizations have specific requirements. Choice architecture requires balancing designer values against user autonomy, avoiding manipulation while still guiding users toward beneficial choices. Organizations building ISA security expertise apply similar behavioral insights to security awareness training, recognizing that human behavior often determines security outcomes more than technical controls.
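The "safe by default, customizable on request" pattern can be sketched in a few lines. This is an illustrative sketch only: `AssistantConfig`, its field names, and the `customize` helper are hypothetical and do not correspond to actual GH-300 or GitHub Copilot settings.

```python
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class AssistantConfig:
    # Hypothetical settings; every default is the more cautious option.
    require_explicit_accept: bool = True
    block_public_code_matches: bool = True
    audit_logging: bool = True


def customize(base, justification="", **overrides):
    """Return a copy of `base` with overrides applied.

    Turning a safeguard off adds friction: the caller must supply a
    written justification. Keeping or enabling a safeguard needs none.
    """
    weakened = [name for name, value in overrides.items() if value is False]
    if weakened and not justification.strip():
        raise ValueError(f"justification required to disable: {weakened}")
    return replace(base, **overrides)


default_config = AssistantConfig()
relaxed_config = customize(
    default_config,
    justification="Internal sandbox with no production code",
    audit_logging=False,
)
```

The design choice here is asymmetric friction: the default path costs nothing, while weakening a safeguard stays possible but requires a deliberate, recorded step.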

Cross-Sector Learning and Best Practice Sharing

Responsible AI challenges manifest across sectors, with each domain developing practices and insights valuable to others. Healthcare AI's rigorous safety validation approaches inform other high-stakes applications, financial services' fairness testing methodologies apply broadly, and education's transparency standards offer models for other domains. The GH-300's ecosystem benefits from this cross-sector learning through industry forums, standards development, and academic research synthesizing insights across domains. GitHub Copilot's learnings about developer-AI interaction inform broader human-AI collaboration patterns applicable beyond coding assistance.

Microsoft's engagement in responsible AI consortia and standards bodies facilitates knowledge sharing while advancing industry-wide capability maturation. Cross-sector learning requires translating domain-specific language and contexts, adapting practices to different regulatory environments, and overcoming competitive sensitivities around sharing potentially advantageous approaches. Professionals obtaining ISACA certifications engage with cross-sector governance frameworks, learning how audit, risk management, and control principles apply across diverse industries.

Long-Term Research Agenda and Unsolved Problems

Despite rapid progress in responsible AI, fundamental research questions remain unsolved, including reliable fairness metrics applicable across diverse contexts, scalable transparency mechanisms for complex models, and robust verification of safety properties. The GH-300's development roadmap includes support for emerging techniques as research matures, while GitHub Copilot's evolution incorporates new findings from academic and industry research addressing current limitations. Unsolved problems include detecting and mitigating subtle biases that evade current testing approaches, providing meaningful explanations for deep learning models without sacrificing accuracy, and predicting emergent behaviors in complex AI systems.

Sustained research requires funding from diverse sources including government, industry, and philanthropy to maintain independence and breadth of investigation. Organizations should maintain realistic expectations about current capabilities while following research developments that may enable future improvements. This balanced perspective avoids both dismissing legitimate concerns as unsolvable and delaying deployments waiting for perfect solutions. Architects earning AWS solutions architect credentials similarly balance current best practices against emerging techniques, implementing proven approaches while monitoring innovations that may offer future advantages.

Organizational Change Management for AI Adoption

Successful responsible AI implementation requires organizational change beyond technology deployment, including updated policies, new governance structures, and cultural adaptation valuing ethical considerations alongside efficiency. The GH-300's introduction into organizations often catalyzes broader AI governance conversations, prompting policy development, role clarification, and process changes spanning multiple departments. Change management challenges include overcoming resistance from stakeholders comfortable with existing approaches and ensuring that formal policies translate into actual practice.

GitHub Copilot adoption similarly requires organizations to address questions about code ownership, quality assurance processes, and developer training that extend beyond simply providing tool access. Effective change management involves stakeholder engagement, clear communication about rationale and benefits, and realistic timelines allowing adaptation rather than forcing rushed transformation. Organizations should expect iterative refinement as initial policies prove inadequate or overly restrictive when confronted with operational realities. Teams preparing for AWS professional architect certification develop change management awareness, recognizing that technology transformation requires addressing organizational, process, and cultural dimensions alongside technical implementation.

Metrics, Monitoring, and Continuous Improvement Cycles

Effective responsible AI requires continuous monitoring against defined metrics with feedback loops enabling iterative improvement. The GH-300's telemetry systems provide real-time dashboards tracking responsibility metrics including bias detection rates, energy efficiency, and audit log completeness. These metrics inform operational decisions about system tuning, capacity planning, and when interventions prove necessary to maintain acceptable behavior. GitHub Copilot's monitoring encompasses user satisfaction, suggestion acceptance rates disaggregated by various demographic and contextual factors, and incident reports requiring investigation.

Continuous improvement cycles analyze these metrics to identify degradation trends, validate that updates achieve intended effects, and prioritize future development addressing the most impactful gaps. Establishing appropriate metrics requires balancing comprehensiveness against cognitive load, selecting indicators that meaningfully reflect responsibility objectives without overwhelming operators with excessive data. Professionals obtaining AWS DevOps professional expertise implement similar monitoring and continuous improvement for infrastructure, establishing telemetry, alerting, and automated remediation enabling proactive issue resolution.
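The monitoring loop described above can be sketched as two small steps: disaggregate an acceptance-rate metric by a contextual factor, then compare recent values against earlier ones to flag degradation. This is a minimal sketch under stated assumptions: the event format, the `acceptance_rates` and `is_degrading` helpers, and the window and tolerance values are hypothetical, and the sample numbers are made up for illustration rather than drawn from any real telemetry.

```python
from collections import defaultdict
from statistics import mean


def acceptance_rates(events):
    """Return {group: accepted / shown} for (group, was_accepted) pairs."""
    shown = defaultdict(int)
    accepted = defaultdict(int)
    for group, was_accepted in events:
        shown[group] += 1
        if was_accepted:
            accepted[group] += 1
    return {group: accepted[group] / shown[group] for group in shown}


def is_degrading(series, window=3, tolerance=0.05):
    """Flag a drop when the mean of the latest `window` points falls
    more than `tolerance` below the mean of the preceding window."""
    if len(series) < 2 * window:
        return False  # not enough history to compare two windows
    previous = mean(series[-2 * window:-window])
    latest = mean(series[-window:])
    return previous - latest > tolerance


# Illustrative data only: suggestion events grouped by language.
events = [("python", True), ("python", False), ("go", True), ("go", True)]
rates = acceptance_rates(events)  # {"python": 0.5, "go": 1.0}

# Illustrative weekly acceptance rates for one group.
weekly = [0.42, 0.41, 0.43, 0.35, 0.33, 0.34]
alert = is_degrading(weekly)  # True: mean fell from 0.42 to 0.34
```

Comparing window means rather than single points keeps the indicator from firing on one noisy week, which fits the goal of limiting the number of signals operators must track.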

The Path Forward for Responsible AI at Scale

Scaling responsible AI from isolated deployments to widespread adoption requires addressing technical, organizational, and societal challenges that current approaches only partially solve. The GH-300 and GitHub Copilot represent significant advances demonstrating that responsibility and performance can coexist, though much work remains to generalize these successes across diverse AI applications and contexts. Progress requires sustained commitment from technology providers, thoughtful adoption by organizations, informed oversight by regulators, and ongoing engagement from civil society ensuring that AI development serves broad societal interests.

Future responsible AI development will likely see greater standardization enabling interoperability, mature insurance markets transferring certain risks, evolved legal frameworks clarifying liability and requirements, and professional certification programs establishing baseline competency for AI practitioners. These institutional developments will complement technical advances, creating ecosystems supporting responsible AI through multiple reinforcing mechanisms. Organizations embarking on AI adoption should view responsibility as a strategic investment rather than a compliance burden, recognizing that trust, sustainability, and resilience ultimately determine long-term success in AI-enabled transformation.

Conclusion

Examining Microsoft GH-300 strategies for responsible AI and GitHub Copilot reveals a comprehensive approach to embedding ethical considerations throughout the AI technology stack. From silicon-level bias detection circuits to application-layer transparency mechanisms, these systems demonstrate that responsible AI requires coordinated intervention across hardware architecture, software design, operational practices, and organizational governance. The GH-300's specialized circuits for audit logging, energy efficiency, and behavioral monitoring provide foundational capabilities that applications like GitHub Copilot leverage to implement user-facing responsibility features including content filtering, attribution systems, and transparency interfaces.

This integrated approach challenges false dichotomies between performance and responsibility, showing that thoughtful engineering can advance both objectives simultaneously rather than treating them as competing priorities. The GH-300's architecture achieves this through specialized accelerators that perform responsibility computations in parallel with primary workloads, eliminating the performance penalties that might otherwise discourage rigorous safety checks. GitHub Copilot similarly demonstrates that developer productivity and responsible code generation can coexist through careful system design that makes safe, ethical behavior the default path while maintaining flexibility for legitimate edge cases. These examples establish templates for responsible AI implementation across domains, illustrating principles applicable beyond their specific contexts.

Looking forward, the path toward broadly adopted responsible AI depends on continued innovation addressing unsolved technical challenges, institutional development creating supporting ecosystems, and cultural evolution valuing ethical considerations alongside efficiency and capability. Organizations deploying AI systems must recognize that responsibility represents an ongoing commitment rather than a one-time achievement, requiring sustained investment in monitoring, testing, and improvement as capabilities evolve and societal expectations shift. The example of the GH-300 and GitHub Copilot shows that leading technology providers increasingly embed responsibility into their core value propositions, treating it as a competitive differentiator rather than a regulatory burden. This commercial recognition that responsibility drives long-term success provides grounds for optimism that responsible AI principles will continue maturing and spreading throughout the technology industry.

The synthesis of hardware capabilities, software implementation, and organizational practice illustrated throughout this discussion demonstrates that responsible AI requires multifaceted approaches addressing technical, policy, and cultural dimensions simultaneously. No single intervention suffices; rather, effective responsibility emerges from reinforcing mechanisms spanning the entire AI lifecycle from initial design through deployment, operation, and eventual retirement. The GH-300's silicon-level safeguards complement GitHub Copilot's algorithmic filtering, which in turn supports organizational governance frameworks that define acceptable AI use within specific contexts. This defense-in-depth approach recognizes that responsibility mechanisms may fail individually, requiring redundant protections ensuring that single-point failures don't compromise overall system integrity and trustworthiness.