Exam Code: Terraform Associate 003

Exam Name: HashiCorp Certified: Terraform Associate (003)

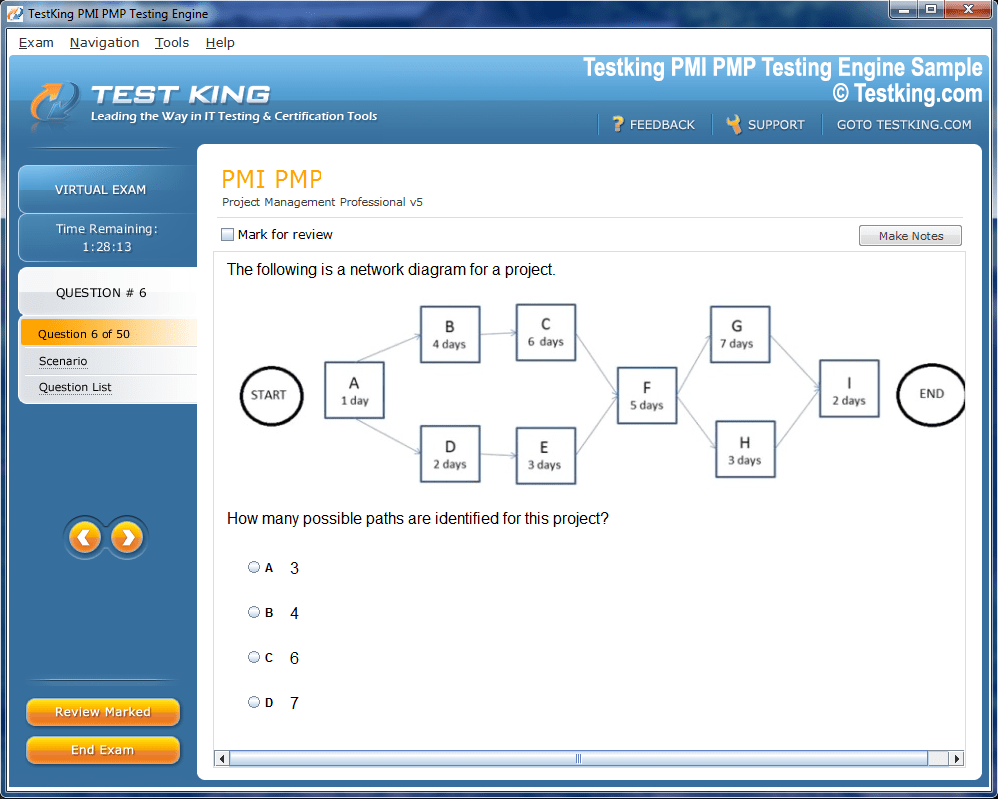

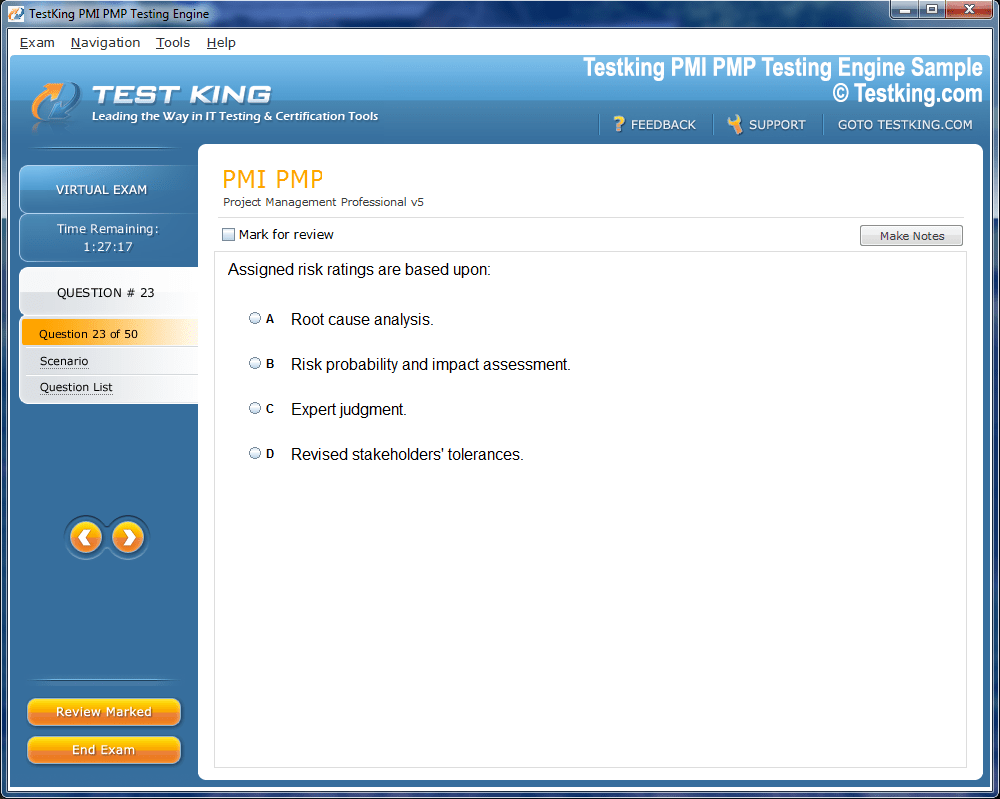

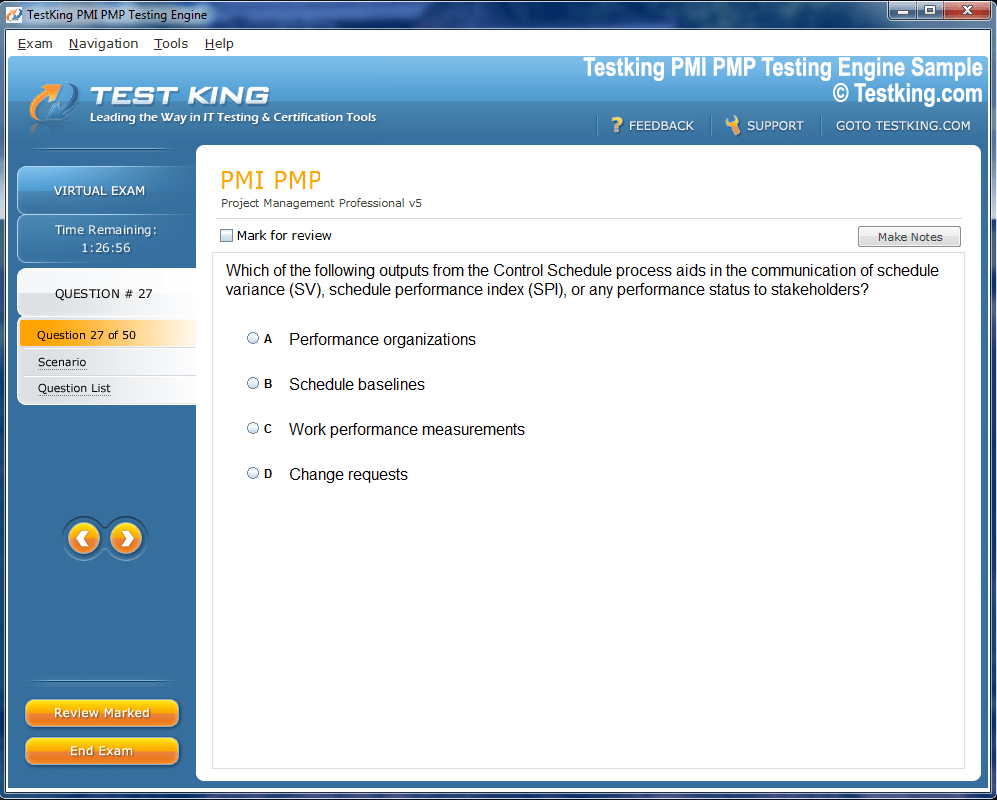

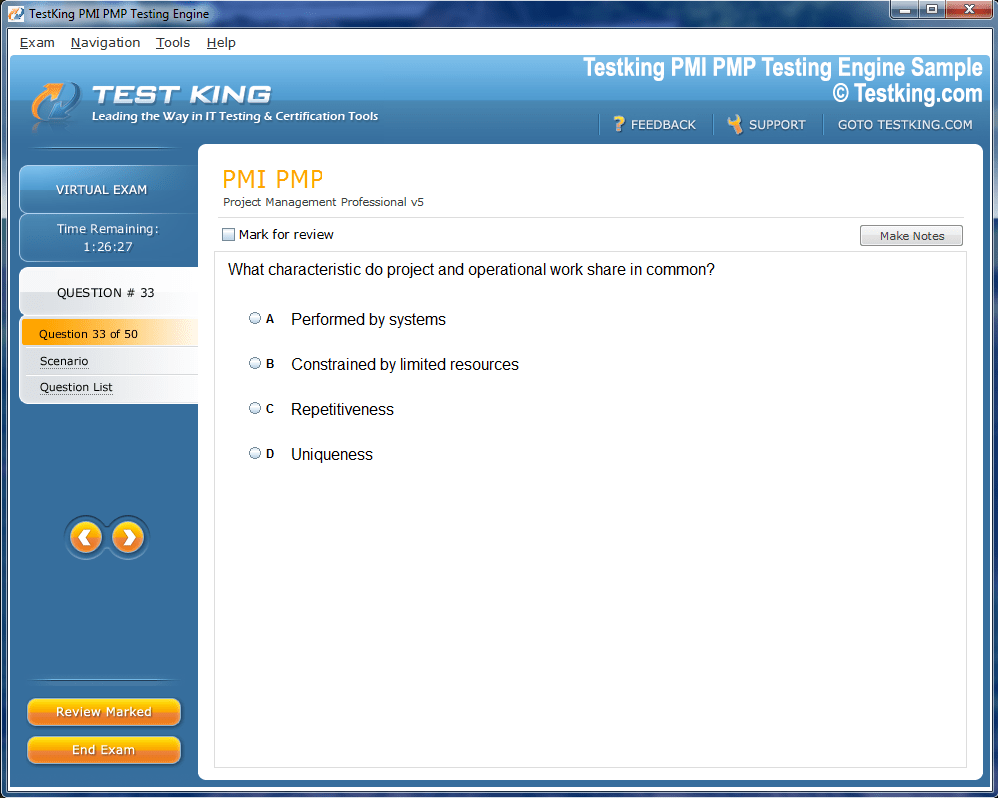

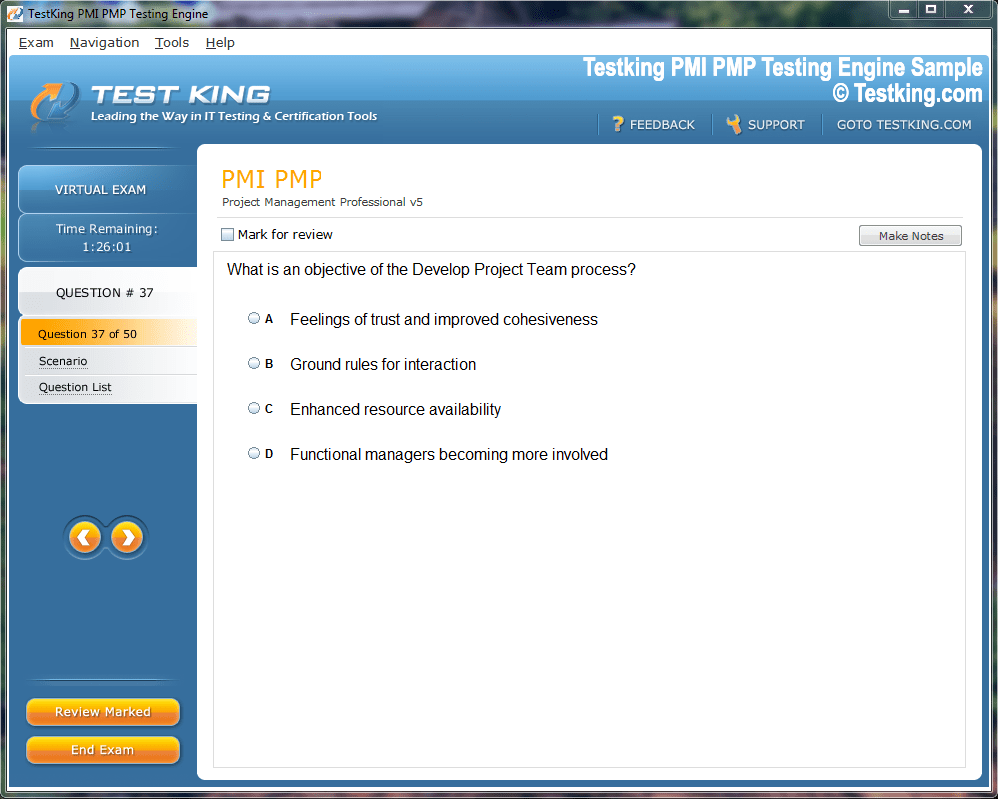

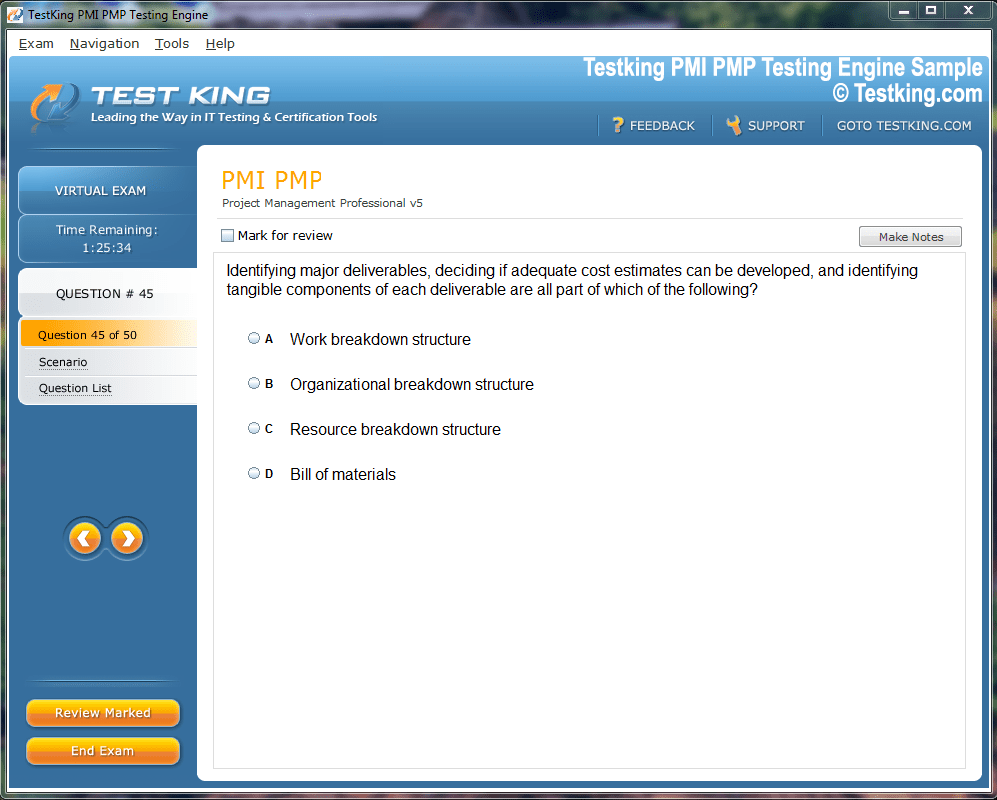

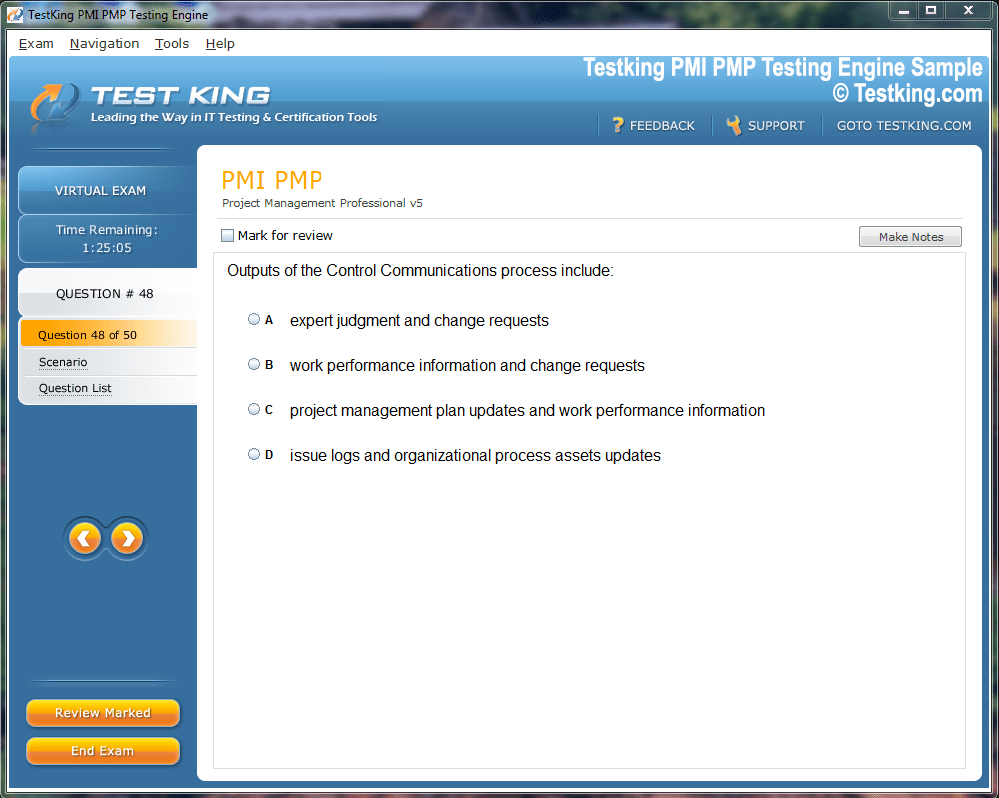

Product Screenshots

Building Expertise with HashiCorp Terraform Associate 003 for Modern Infrastructure

Infrastructure as Code represents a fundamental shift in how organizations provision and manage their technology resources. Rather than manually configuring servers and networks through point-and-click interfaces, teams now define their entire infrastructure in declarative configuration files. This approach brings software development practices to infrastructure management, enabling version control, peer review, and automated testing of infrastructure changes before they reach production environments.

Terraform has emerged as a leading multi-cloud provisioning tool, allowing practitioners to manage resources across AWS, Azure, Google Cloud, and many other providers through a single workflow. The HashiCorp Terraform Associate 003 certification validates foundational knowledge of Terraform's core concepts, workflow, and best practices. Professionals pursuing this credential demonstrate their ability to write, plan, and apply infrastructure code while maintaining consistency and reliability in deployment processes.

The Evolution of Infrastructure Management Paradigms

Traditional infrastructure management relied heavily on manual processes, where system administrators would configure each server individually through console access or remote desktop sessions. This approach worked adequately for small environments but quickly became unsustainable as infrastructure complexity grew. Configuration drift, where servers diverged from their intended state over time, created significant operational challenges and security vulnerabilities that plagued IT departments.

The rise of virtualization and cloud computing accelerated the need for programmatic infrastructure management. Organizations found themselves provisioning and deprovisioning resources at unprecedented speeds, making manual approaches entirely impractical. HashiCorp recognized this gap and designed Terraform to treat infrastructure with the same rigor that developers apply to application code, so that teams can define infrastructure once and keep it consistent across environments.

Core Components of the Terraform Workflow

The Terraform workflow as presented here consists of four stages that practitioners must master for the Associate 003 certification: write, init, plan, and apply. The write phase involves authoring configuration files in HashiCorp Configuration Language (HCL), defining the desired state of infrastructure resources. These files specify everything from virtual machine specifications to network configurations, database instances, and DNS records in a human-readable format that facilitates collaboration and review.

The terraform init command initializes a working directory containing Terraform configuration files, downloading the necessary provider plugins and preparing the backend for state management. During the plan phase, terraform plan creates an execution plan that shows what changes will occur when the configuration is applied. This preview allows teams to verify intended changes before modifying production infrastructure, reducing the risk of unexpected outages or misconfigurations, which matters most where multiple engineers contribute to the same infrastructure codebase. Finally, terraform apply carries out the planned changes and records the results in state.
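
The stages above can be tried end to end with a configuration that needs no cloud credentials. The following is a minimal sketch, assuming the hashicorp/local provider; the resource name and file name are illustrative, not part of any exam material.

```hcl
terraform {
  required_providers {
    local = {
      source = "hashicorp/local"
    }
  }
}

# Write: declare the desired state. Here, a single file on disk.
resource "local_file" "greeting" {
  filename = "${path.module}/hello.txt"
  content  = "Hello from Terraform\n"
}

# Then, from the directory containing this file:
#   terraform init    # download the provider plugin, prepare the backend
#   terraform plan    # preview the changes without making them
#   terraform apply   # create hello.txt after confirmation
```

Because the only managed object is a local file, running terraform destroy afterwards cleans up completely.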

Understanding Terraform State Management Fundamentals

State management represents one of Terraform's most critical yet often misunderstood concepts. The state file serves as Terraform's memory, tracking which real-world resources correspond to configuration definitions. Without accurate state information, Terraform cannot determine what actions to take when applying configuration changes. This file contains sensitive information about infrastructure, including resource identifiers, IP addresses, and sometimes credentials, requiring careful security considerations.

Local state storage works adequately for individual practitioners learning Terraform basics, but team environments require remote state backends that enable collaboration and prevent concurrent modification conflicts. Remote backends like Terraform Cloud, AWS S3 with DynamoDB locking, or Azure Storage provide state locking mechanisms that prevent multiple users from simultaneously applying changes. Proper state management also supports security: infrastructure stays in a known, auditable configuration, and unauthorized changes show up as differences between the recorded state and the real resources.
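
As an illustration of a remote backend with locking, the sketch below configures the s3 backend with a DynamoDB lock table. The bucket and table names are hypothetical and both must already exist; recent Terraform releases also offer an S3-native locking option, so the backend documentation for the installed version is the authority here.

```hcl
terraform {
  backend "s3" {
    bucket         = "example-terraform-state" # hypothetical bucket name
    key            = "network/terraform.tfstate"
    region         = "us-east-1"
    dynamodb_table = "example-terraform-locks" # hypothetical lock table
    encrypt        = true                      # server-side encryption at rest
  }
}
```

After adding or changing a backend block, terraform init must be run again so Terraform can configure the backend and offer to migrate existing state.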

Provider Architecture and Multi-Cloud Capabilities

Terraform's provider system enables interaction with virtually any API-driven service through a plugin architecture. Each provider translates Terraform's configuration language into API calls specific to the target platform. The Associate 003 exam extensively covers provider configuration, including authentication methods, version constraints, and alias usage for managing multiple instances of the same provider within a single configuration. Multi-cloud strategies have become increasingly common as organizations seek to avoid vendor lock-in and leverage best-of-breed services from different platforms.

Terraform excels in this domain by providing a consistent workflow regardless of the underlying provider. A single configuration might provision compute resources in AWS, DNS records in Cloudflare, and monitoring dashboards in Datadog, all orchestrated through the same terraform apply command. This unification simplifies operations and reduces the cognitive load on infrastructure teams managing heterogeneous environments that span multiple cloud providers and on-premises systems.
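
Provider aliases, mentioned above, might look like the following sketch. It assumes the AWS provider with credentials supplied through the environment; the bucket names are placeholders.

```hcl
# Default provider configuration.
provider "aws" {
  region = "us-east-1"
}

# A second configuration of the same provider, selected by alias.
provider "aws" {
  alias  = "west"
  region = "us-west-2"
}

# Uses the default (us-east-1) configuration.
resource "aws_s3_bucket" "primary" {
  bucket = "example-primary-bucket"
}

# Explicitly selects the aliased (us-west-2) configuration.
resource "aws_s3_bucket" "replica" {
  provider = aws.west
  bucket   = "example-replica-bucket"
}
```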

Resource Blocks and Configuration Syntax Essentials

Resource blocks form the building blocks of Terraform configurations, with each block describing one or more infrastructure objects. The syntax follows a consistent pattern: the resource keyword, followed by the resource type, resource name, and a configuration block containing arguments specific to that resource type. Understanding this structure is fundamental for the Associate 003 certification, as exam questions frequently test the ability to identify correctly formatted resource blocks and troubleshoot syntax errors.

Arguments within resource blocks can be literal values, references to other resources, or expressions that compute values dynamically. The depends_on meta-argument allows explicit dependency declaration when Terraform cannot infer resource relationships automatically. The count and for_each meta-arguments enable resource multiplication, creating multiple similar resources from a single block definition. Mastering these concepts enables practitioners to write concise, maintainable configurations that stay readable and support team collaboration on complex infrastructure projects.
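
The block structure and meta-arguments described above can be sketched as follows, assuming the AWS provider; all names and CIDR ranges are illustrative.

```hcl
# resource "<TYPE>" "<NAME>" { <arguments> }
resource "aws_vpc" "main" {
  cidr_block = "10.0.0.0/16"
}

# count creates two subnets; referencing aws_vpc.main gives Terraform
# an implicit dependency, so no depends_on is needed here.
resource "aws_subnet" "app" {
  count      = 2
  vpc_id     = aws_vpc.main.id
  cidr_block = cidrsubnet(aws_vpc.main.cidr_block, 8, count.index)
}

resource "aws_internet_gateway" "gw" {
  vpc_id = aws_vpc.main.id
}

# No argument of the EIP refers to the gateway, so the ordering
# requirement is stated explicitly with depends_on.
resource "aws_eip" "nat" {
  depends_on = [aws_internet_gateway.gw]
}

# for_each creates one bucket per set element, addressed by key,
# for example aws_s3_bucket.logs["audit"].
resource "aws_s3_bucket" "logs" {
  for_each = toset(["app", "audit"])
  bucket   = "example-${each.key}-logs"
}
```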

Variables and Outputs for Configuration Flexibility

Input variables make Terraform configurations reusable across different environments by parameterizing values that change between deployments. Rather than hardcoding values like instance sizes or region names, practitioners define variables with type constraints, default values, and descriptions. Variable values can be supplied through command-line flags, environment variables, terraform.tfvars files, or interactive prompts, providing flexibility in how configurations are executed.

Output values extract information from the infrastructure for use in other configurations or for display to operators. Outputs become particularly valuable in module-based architectures, where one module's outputs serve as inputs to another module. The Associate 003 exam tests understanding of variable validation, marking outputs as sensitive, and the proper use of locals for intermediate value computation.
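
A short sketch of these pieces working together, with hypothetical variable and output names:

```hcl
variable "environment" {
  type        = string
  description = "Deployment environment name."
  default     = "dev"

  # Rejects any value outside the allowed list at plan time.
  validation {
    condition     = contains(["dev", "staging", "prod"], var.environment)
    error_message = "The environment must be dev, staging, or prod."
  }
}

variable "db_password" {
  type      = string
  sensitive = true # redacted in plan and apply output
}

# Locals hold intermediate values computed from other expressions.
locals {
  name_prefix = "app-${var.environment}"
}

output "name_prefix" {
  value = local.name_prefix
}

# An output derived from a sensitive value must itself be marked sensitive.
output "db_password" {
  value     = var.db_password
  sensitive = true
}
```

A value for environment could then be supplied with -var="environment=prod", a TF_VAR_environment environment variable, or a terraform.tfvars file.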

Modules for Code Organization and Reusability

Modules represent the primary method for organizing and packaging Terraform code for reuse. A module is simply a directory containing Terraform configuration files that can be called from other configurations. The root module refers to the configuration in the current working directory, while child modules are called using module blocks that specify the source location and input variables. Module sources can be local file paths, Git repositories, Terraform Registry addresses, or HTTP URLs pointing to packaged configurations.

The Terraform Registry hosts thousands of community-maintained modules for common infrastructure patterns, allowing teams to leverage proven configurations rather than building everything from scratch. Versioning modules through Git tags or Registry versions enables controlled updates and prevents breaking changes from unexpectedly affecting existing infrastructure.
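
For illustration, a Registry module and a local module might be called as below. The Registry module shown is the community VPC module; the version constraint, the local path ./modules/app, and its vpc_id input are assumptions made for this sketch.

```hcl
# Registry source: <NAMESPACE>/<NAME>/<PROVIDER>, pinned with a version constraint.
module "vpc" {
  source  = "terraform-aws-modules/vpc/aws"
  version = "~> 5.0"

  name = "example-vpc"
  cidr = "10.0.0.0/16"
}

# Local source: a directory relative to the calling module (hypothetical).
# The version argument is only valid for registry sources.
module "app" {
  source = "./modules/app"

  # One module's output becomes another module's input.
  vpc_id = module.vpc.vpc_id
}
```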

Data Sources for External Information Integration

Data sources enable Terraform configurations to fetch information from external systems or from infrastructure managed outside the current configuration. Unlike resources that create and manage infrastructure objects, data sources are read-only and simply retrieve existing information. Common use cases include looking up AMI IDs, fetching IP address ranges for firewall rules, or retrieving information about existing VPCs that the configuration should integrate with.

The data block syntax resembles resource blocks but uses the data keyword instead. Each data source has provider-specific arguments that filter or specify which information to retrieve. Data sources support depends_on to control when they refresh, though Terraform automatically infers most dependencies. Understanding when to use data sources versus hardcoded values improves configuration maintainability and reduces errors when infrastructure details change.
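
The AMI lookup case might be sketched as follows, assuming the AWS provider; the name filter targets Ubuntu 22.04 images published by Canonical and is only an example.

```hcl
# Read-only lookup: nothing is created or modified by a data block.
data "aws_ami" "ubuntu" {
  most_recent = true
  owners      = ["099720109477"] # Canonical's AWS account ID

  filter {
    name   = "name"
    values = ["ubuntu/images/hvm-ssd/ubuntu-jammy-22.04-amd64-server-*"]
  }
}

# The result is referenced as data.<TYPE>.<NAME>.<ATTRIBUTE>.
resource "aws_instance" "web" {
  ami           = data.aws_ami.ubuntu.id
  instance_type = "t3.micro"
}
```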

Terraform Cloud and Enterprise Features Overview

Terraform Cloud provides a hosted environment for running Terraform operations with additional collaboration features, remote state management, and policy enforcement capabilities. The Associate 003 exam covers Terraform Cloud's free tier features, including workspaces, VCS integration, and remote execution. Organizations using Terraform Cloud benefit from consistent execution environments, detailed run logs, and automated application workflows triggered by repository changes. Workspace-based organization allows teams to manage multiple environments or configurations within a single Terraform Cloud account.

Each workspace maintains its own state, variables, and settings, preventing accidental cross-environment changes. Sentinel policy as code enables governance teams to enforce organizational standards, such as requiring specific tags on all resources or prohibiting certain resource types. These features help bring infrastructure provisioning into compliance frameworks where policy enforcement and audit trails are essential.

Configuration Best Practices and Style Conventions

Consistent formatting and naming conventions make Terraform configurations more readable and maintainable across teams. The terraform fmt command automatically rewrites configuration files to the canonical format, normalizing indentation, spacing, and the alignment of equals signs; it does not reorder arguments, so ordering conventions from HashiCorp's style guide still have to be followed by hand. Running this command before committing changes ensures that version control diffs highlight meaningful changes rather than whitespace variations. Resource and variable naming should follow consistent patterns that clearly communicate purpose and scope.

Using snake_case for resource names, descriptive variable names, and meaningful tags improves configuration understandability. Comments should explain why decisions were made rather than what the code does, as properly written Terraform is largely self-documenting. Clear documentation and a systematic approach reduce errors and improve collaboration across the team.

Version Constraints and Provider Locking Mechanisms

Terraform configurations should specify version constraints for both Terraform itself and the providers used. The required_version argument in the terraform block ensures that only compatible Terraform versions can execute the configuration, preventing issues caused by version-specific syntax or behavior changes. Provider version constraints prevent unexpected breaking changes when provider authors release new versions with incompatible changes.

The dependency lock file (.terraform.lock.hcl) records the exact provider versions selected during initialization, ensuring consistent behavior across different execution environments. This file should be committed to version control so that all team members and CI/CD pipelines use identical provider versions. Version pinning strategies balance stability against the need to receive bug fixes and security updates, requiring periodic review and intentional updates (for example with terraform init -upgrade) rather than automatic acceptance of every new release.
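
A minimal sketch of both kinds of constraint; the specific version numbers are illustrative choices, not recommendations.

```hcl
terraform {
  # Refuse to run under Terraform versions outside this range.
  required_version = ">= 1.3.0, < 2.0.0"

  required_providers {
    aws = {
      source = "hashicorp/aws"
      # "~> 5.0" allows any 5.x release but not 6.0.
      version = "~> 5.0"
    }
  }
}
```

The constraint defines the acceptable range, while .terraform.lock.hcl records the single version actually selected within that range.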

Common Terraform Commands and CLI Operations

The Terraform CLI provides numerous commands beyond the core workflow operations. The terraform validate command checks configuration syntax without accessing remote state or provider APIs, enabling fast feedback during development. The terraform show command displays the current state or a saved plan in human-readable format, useful for reviewing infrastructure status or verifying planned changes before approval.

The terraform taint command marks a resource for recreation during the next apply, useful when a resource has entered a degraded state that Terraform cannot detect through normal attribute comparison. Since Terraform v0.15.2 the command is deprecated in favor of terraform apply -replace=ADDRESS, which plans and performs the replacement in one step. The terraform import command brings existing infrastructure under Terraform management by adding it to the state file, though it requires manually writing the corresponding configuration (Terraform 1.5 and later add import blocks with experimental configuration generation). Understanding these commands and their appropriate use cases forms a significant portion of the Associate 003 exam content.

Resource Lifecycle Management and Meta-Arguments

Resource lifecycle meta-arguments provide fine-grained control over how Terraform creates, updates, and destroys resources. The create_before_destroy argument instructs Terraform to create replacement resources before destroying the old ones, preventing service interruptions during updates that require recreation. This proves particularly valuable for resources like load balancer target groups or DNS records where maintaining continuous availability is critical.

The prevent_destroy lifecycle argument adds a safety check that causes Terraform to reject any plan that would destroy the protected resource, preventing accidental deletion of critical infrastructure like databases or DNS zones. The ignore_changes argument tells Terraform to ignore specific attribute changes, useful when external systems modify resource attributes that should not trigger Terraform updates. Together, these lifecycle controls protect critical assets from accidental modification.
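
The three lifecycle arguments might be combined as in this sketch, which assumes the AWS provider; the variable, resource names, and bucket name are hypothetical.

```hcl
variable "ami_id" {
  type        = string
  description = "Hypothetical AMI ID supplied by the caller."
}

resource "aws_instance" "web" {
  ami           = var.ami_id
  instance_type = "t3.micro"

  lifecycle {
    # Build the replacement first, then destroy the old instance.
    create_before_destroy = true

    # Tag edits made outside Terraform will not show up as drift.
    ignore_changes = [tags]
  }
}

resource "aws_s3_bucket" "audit_logs" {
  bucket = "example-audit-logs"

  lifecycle {
    # Any plan that would destroy this bucket fails with an error.
    prevent_destroy = true
  }
}
```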

Debugging and Troubleshooting Terraform Configurations

Effective debugging starts with understanding Terraform's verbose logging capabilities. Setting the TF_LOG environment variable to TRACE, DEBUG, INFO, WARN, or ERROR controls the verbosity of Terraform's output, with TRACE providing the most detailed information including all provider API calls. The TF_LOG_PATH variable directs this output to a file rather than standard error, enabling analysis of long-running operations or automation logs. Common configuration errors include circular dependencies, where resources reference each other in ways that prevent Terraform from determining a valid execution order.

The terraform graph command outputs the dependency graph in DOT format, which tools such as Graphviz can render as a diagram, helping identify these cycles. Type mismatches between variable definitions and supplied values cause frequent errors, as do attempts to reference resources that do not yet exist or have been removed from the configuration. A systematic approach, in which each issue is analyzed and documented thoroughly, leads to effective remediation.

Terraform Registry and Community Resources

The Terraform Registry serves as a central repository for modules and providers maintained by HashiCorp, cloud vendors, and the community. Verified modules indicate that HashiCorp has confirmed the publisher's identity and that the module follows certain quality standards. Organizations that need internal-only modules can publish them to a private registry in Terraform Cloud or Terraform Enterprise, facilitating standardization across teams while maintaining confidentiality of proprietary configurations. Browsing the public Registry helps practitioners learn Terraform patterns and avoid reinventing solutions to common problems.

Each module's documentation includes usage examples, input variable descriptions, and output value definitions. The Registry also hosts provider documentation, which serves as the primary reference for available resource types and their arguments. Leveraging these resources accelerates development and reduces errors compared with unguided exploration.

Workspace Management for Environment Separation

Terraform workspaces provide a mechanism for managing multiple environments within a single configuration codebase. Each workspace maintains its own state file, allowing practitioners to use identical configurations for development, staging, and production environments while keeping the infrastructure completely separate. The terraform workspace command manages workspace creation, selection, and deletion. While workspaces work well for simple environment separation, complex scenarios may require separate configurations or sophisticated variable management to handle environment-specific differences.

The current workspace name is available through the terraform.workspace expression, enabling conditional logic that adjusts resource configurations based on the active workspace. However, overusing workspace-conditional logic can reduce configuration readability and increase complexity, so separate configurations may be more appropriate for environments with significant differences in architecture or resource requirements.
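
A small sketch of workspace-aware configuration, assuming the AWS provider and workspaces named dev, staging, and prod; the bucket name is a placeholder.

```hcl
locals {
  is_prod = terraform.workspace == "prod"
}

# The workspace name keeps per-environment resources distinct.
resource "aws_s3_bucket" "artifacts" {
  bucket = "example-artifacts-${terraform.workspace}"

  tags = {
    Environment = terraform.workspace
  }
}

# Versioning is enabled only in the prod workspace.
resource "aws_s3_bucket_versioning" "artifacts" {
  bucket = aws_s3_bucket.artifacts.id

  versioning_configuration {
    status = local.is_prod ? "Enabled" : "Suspended"
  }
}

# Workspaces are managed from the CLI:
#   terraform workspace new staging
#   terraform workspace select prod
#   terraform workspace list
```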

State File Security and Sensitive Data Handling

Terraform state files contain comprehensive information about managed infrastructure, including resource identifiers, attribute values, and sometimes credentials or encryption keys. Protecting state files from unauthorized access is critical for maintaining infrastructure security. Remote state backends should enforce encryption at rest and in transit, with access controls that limit who can read or modify state data. Marking outputs as sensitive prevents Terraform from displaying their values in CLI output, though they remain visible in the state file.

For truly sensitive data like passwords or API keys, organizations should use dedicated secret management systems like HashiCorp Vault and reference those secrets in Terraform configurations rather than storing them directly. The ignore_changes lifecycle argument can prevent Terraform from overwriting externally rotated credentials, though this requires careful coordination between Terraform and other management systems to avoid configuration drift.

Preparing for the HashiCorp Terraform Associate 003 Exam

The Associate 003 certification exam consists of multiple-choice and multiple-select questions covering all aspects of Terraform's core workflow, syntax, and concepts. Successful candidates typically combine hands-on practice with study of official documentation and exam objectives. Building real infrastructure across multiple providers deepens understanding beyond what reading alone can achieve, as practical experience reveals subtle behaviors and edge cases not always evident in documentation. HashiCorp provides a detailed exam review guide outlining the topics covered and their relative weighting.

The exam emphasizes practical knowledge over memorization, testing the ability to read and understand configurations, identify errors, and select appropriate approaches for given scenarios. Practice environments using free-tier cloud resources allow exam preparation without significant expense, while hands-on experience with different providers and configuration patterns builds the comprehensive knowledge required for certification success.

Advanced State Management Techniques and Strategies

State management complexity increases significantly as infrastructure scales beyond simple configurations. State locking prevents concurrent modifications, but long-running operations can hold locks and block other team members from applying changes. Understanding lock timeout configurations and using the -lock-timeout flag enables teams to handle transient lock conflicts without manual intervention, though persistent locks may indicate stuck processes that require investigation and manual release.

State file migration between backends occasionally becomes necessary when organizations change their infrastructure management strategies or consolidate multiple Terraform configurations. The terraform state commands provide low-level state manipulation capabilities, including moving resources between state files (terraform state mv), removing resources from management (terraform state rm), and listing current state contents (terraform state list). These operations require careful execution, as incorrect state manipulation can leave infrastructure in inconsistent states that are difficult to repair, particularly when resources have complex dependencies across multiple services and providers.

Dynamic Blocks for Repeating Configuration Patterns

Dynamic blocks generate repeated nested blocks within resources or modules based on collections of data. This feature proves particularly useful when a resource type requires multiple similar configuration blocks, such as ingress rules in security groups or routing rules in load balancers. The dynamic keyword introduces a dynamic block, with for_each specifying the collection to iterate over and content defining the structure of each generated block. The iterator argument allows customization of the temporary variable name used within the dynamic block's content, though the default name matches the dynamic block label.

Dynamic blocks can be nested to handle complex hierarchical configurations, though excessive nesting reduces readability. Understanding when dynamic blocks improve configurations versus when they introduce unnecessary complexity represents an important skill for maintaining codebases that remain comprehensible as they grow, balancing flexibility with operational simplicity.
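
The security group case can be sketched as follows, assuming the AWS provider; the variable name and port list are illustrative.

```hcl
variable "ingress_ports" {
  type    = list(number)
  default = [80, 443]
}

resource "aws_security_group" "web" {
  name = "example-web"

  # Generates one nested ingress block per element of the list.
  # The iterator defaults to the block label, hence ingress.value.
  dynamic "ingress" {
    for_each = var.ingress_ports

    content {
      from_port   = ingress.value
      to_port     = ingress.value
      protocol    = "tcp"
      cidr_blocks = ["0.0.0.0/0"]
    }
  }
}
```

With the default value this produces two ingress blocks, one for port 80 and one for port 443, without repeating the block by hand.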

Conditional Expressions and Resource Creation Logic

Conditional expressions using the ternary operator enable configurations to adapt based on input variables or external conditions. The syntax condition ? true_value : false_value evaluates the condition and returns one of two values based on the result. This capability supports environment-specific configurations without duplicating code, such as selecting different instance types for development versus production environments. Count and for_each meta-arguments leverage conditional logic to create resources conditionally.

Setting count to 0 prevents resource creation, while setting it to 1 creates the resource, enabling toggling of optional infrastructure components through boolean variables. The for_each approach provides more flexibility by creating resources based on map or set contents, with each.key and each.value providing access to iteration data. These techniques enable sophisticated infrastructure patterns that adapt to changing requirements while maintaining a single source of configuration truth.
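
Both patterns might look like the sketch below, assuming the AWS provider; the variable names, log group name, and bucket names are hypothetical.

```hcl
variable "enable_logging" {
  type    = bool
  default = false
}

# Optional component: exists only when the flag is true.
resource "aws_cloudwatch_log_group" "app" {
  count = var.enable_logging ? 1 : 0
  name  = "/example/app"
}

variable "bucket_owners" {
  type = map(string)
  default = {
    logs   = "platform-team"
    assets = "web-team"
  }
}

# One bucket per map entry; each.key is the map key, each.value its value.
resource "aws_s3_bucket" "team" {
  for_each = var.bucket_owners
  bucket   = "example-${each.key}"

  tags = {
    Owner = each.value
  }
}
```

A resource that uses count becomes a list, so the log group above is referenced as aws_cloudwatch_log_group.app[0], and only when the flag is true.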

Function Usage in Terraform Configurations

Terraform provides dozens of built-in functions for string manipulation, numeric computation, collection transformation, and data encoding. The file function reads file contents into configurations, useful for embedding scripts or templates. The jsonencode and yamlencode functions convert Terraform data structures into JSON or YAML strings, while their decode counterparts parse structured data from strings. Collection functions like merge, concat, and flatten combine or restructure complex data types.

The lookup function retrieves values from maps with default fallbacks, preventing errors when expected keys are absent. Type conversion functions ensure that values match expected types, particularly important when working with variables of type any. The templatefile function combines file reading with variable interpolation, enabling sophisticated template-based configuration generation.
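
A few of the functions named above, collected in one locals block as a sketch. The template path init.sh.tftpl and its port variable are hypothetical, and the file must exist for the configuration to validate. Expressions like these can also be tried interactively with terraform console.

```hcl
locals {
  # merge combines maps; later arguments win on key conflicts.
  tags = merge({ Project = "example" }, { Environment = "dev" })

  # concat joins lists: [80, 443, 8080]
  ports = concat([80], [443, 8080])

  # flatten removes nesting: [1, 2, 3]
  flat = flatten([[1, 2], [3]])

  # lookup falls back to the default when the key is absent: "t3.small"
  size = lookup({ dev = "t3.micro" }, "prod", "t3.small")

  # jsonencode serializes a Terraform value to a JSON string.
  policy = jsonencode({
    Version   = "2012-10-17"
    Statement = []
  })

  # templatefile renders a file with the supplied variables.
  user_data = templatefile("${path.module}/init.sh.tftpl", { port = 8080 })
}
```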

Provisioners and Their Limited Use Cases

Provisioners execute scripts or commands on local or remote machines as part of Terraform's resource lifecycle. While provisioners enable actions like running configuration management tools or executing initialization scripts, HashiCorp recommends using them sparingly as they break Terraform's declarative model and introduce procedural logic that complicates state management and error recovery. The local-exec provisioner runs commands on the machine executing Terraform, useful for triggering external systems or generating local artifacts.

The remote-exec provisioner executes commands on newly created resources via SSH or WinRM, though this requires network connectivity and credentials that may not be available during provisioning. Connection blocks configure authentication and connectivity details for remote provisioners, supporting password and key-based authentication across different operating systems and network configurations.
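
A sketch of both provisioner types on one resource, assuming the AWS provider and an Ubuntu image reachable over SSH; the variables, login user, and output file name are hypothetical.

```hcl
variable "ami_id" {
  type = string
}

variable "key_name" {
  type = string
}

variable "private_key_path" {
  type = string
}

resource "aws_instance" "web" {
  ami           = var.ami_id
  instance_type = "t3.micro"
  key_name      = var.key_name

  # Shared connection settings for remote provisioners in this resource.
  connection {
    type        = "ssh"
    host        = self.public_ip
    user        = "ubuntu"
    private_key = file(var.private_key_path)
  }

  # Runs on the new instance over SSH.
  provisioner "remote-exec" {
    inline = ["sudo apt-get update -y"]
  }

  # Runs on the machine executing Terraform.
  provisioner "local-exec" {
    command = "echo ${self.public_ip} >> created_hosts.txt"
  }
}
```

Provisioners run only when the resource is created, unless declared with when = destroy, and a failed provisioner leaves the resource marked as tainted.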

Null Resources for Trigger-Based Operations

Null resources provide a mechanism for executing provisioners without creating actual infrastructure resources. These prove useful for running scripts or triggering external systems as part of Terraform operations when those actions do not correspond to any specific infrastructure resource. The null_resource accepts triggers, a map of values that cause the resource to be replaced when any value changes, enabling re-execution of associated provisioners.

Null resources with local-exec provisioners can integrate Terraform with external automation systems, configuration management tools, or custom scripts that handle aspects of infrastructure provisioning outside Terraform's scope. However, reliance on null resources often indicates opportunities to find more declarative solutions through proper resource types or external data sources that better align with Terraform's design philosophy.
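
The trigger mechanism might be used as in this sketch, which assumes the hashicorp/null provider; the file app.conf and the script scripts/reload.sh are hypothetical and must exist for the example to run.

```hcl
resource "null_resource" "reload_config" {
  # When the file's hash changes, the resource is replaced and the
  # provisioner below runs again.
  triggers = {
    config_hash = filesha256("${path.module}/app.conf")
  }

  provisioner "local-exec" {
    command = "${path.module}/scripts/reload.sh"
  }
}
```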

Terraform Import and Existing Infrastructure Integration

The terraform import command brings existing infrastructure under Terraform management by adding resources to the state file. Import requires specifying the resource address in the configuration and the resource's cloud provider identifier. With the CLI command, practitioners must manually write configuration matching the imported resource's current settings, as the command does not generate configuration from existing infrastructure; Terraform 1.5 and later add import blocks with experimental configuration generation.

Importing large amounts of existing infrastructure can be tedious and error-prone. Third-party tools have emerged to assist with bulk imports and configuration generation, though they require validation to ensure accuracy. Organizations transitioning to Terraform often phase imports, bringing critical or frequently modified infrastructure under management first while deferring stable resources. This incremental approach reduces risk and allows teams to build expertise before tackling complex imports involving interconnected resources and legacy systems.
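
As a sketch of the CLI import workflow, assume an S3 bucket named example-legacy-bucket already exists outside Terraform. The bucket name and resource name are placeholders.

```hcl
# Step 1: write a resource block for the object to be imported.
resource "aws_s3_bucket" "legacy" {
  bucket = "example-legacy-bucket"
}

# Step 2: attach the real bucket to that address in state.
#   terraform import aws_s3_bucket.legacy example-legacy-bucket
#
# Step 3: run terraform plan and adjust the block until no changes
# are proposed.
#
# Alternative on Terraform 1.5 or later: declare the import in
# configuration instead of running the command.
#   import {
#     to = aws_s3_bucket.legacy
#     id = "example-legacy-bucket"
#   }
```

The format of the import ID differs per resource type, so the provider documentation for each resource is the reference.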

Backend Configuration for State Storage Options

Terraform supports numerous backend types for state storage, each with different capabilities and operational characteristics. The local backend stores state in a file on the local filesystem, suitable only for individual learning or temporary configurations. The remote backend integrates with Terraform Cloud or Terraform Enterprise, providing state locking, versioning, and team collaboration features in a fully managed environment.

Cloud provider backends like s3, azurerm, and gcs store state in object storage and implement locking through DynamoDB tables, blob leases, and lock objects in Cloud Storage, respectively. The http backend allows custom state storage through any HTTP service that implements the required REST API. Partial backend configuration using the -backend-config flag of terraform init keeps environment-specific settings and credentials out of version-controlled configuration.
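A sketch of partial backend configuration, assuming an S3 bucket and DynamoDB table that already exist. The bucket, table, and file names are hypothetical:

```hcl
# main.tf: only the setting shared by every environment is committed.
terraform {
  backend "s3" {
    key = "network/terraform.tfstate"
  }
}

# prod.s3.tfbackend (hypothetical file, kept per environment):
#
#   bucket         = "example-tfstate-prod"
#   region         = "us-east-1"
#   dynamodb_table = "example-tfstate-locks"
#
# The remaining settings are merged in at initialization time:
#
#   terraform init -backend-config=prod.s3.tfbackend
```

Individual settings can also be passed as key=value pairs, for example -backend-config="bucket=example-tfstate-prod".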

Move and Refactoring Operations in Terraform

The moved block enables refactoring Terraform configurations without destroying and recreating resources. When resource addresses change due to reorganization or module restructuring, moved blocks inform Terraform that a resource has relocated in the configuration rather than being replaced. This preserves existing infrastructure while allowing configuration improvements that would otherwise trigger destructive changes.
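For example, renaming a resource might look like the sketch below. The resource names and the AMI ID are placeholders chosen for illustration:

```hcl
# The resource was previously declared as aws_instance.web.
resource "aws_instance" "web_server" {
  ami           = "ami-0123456789abcdef0" # placeholder AMI ID
  instance_type = "t3.micro"
}

# Tells Terraform that the existing object tracked at the old address
# now belongs to the new address, so the plan shows a move rather than
# a destroy followed by a create.
moved {
  from = aws_instance.web
  to   = aws_instance.web_server
}
```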

The terraform state mv command provides an alternative approach for moving resources between state files or changing resource addresses directly in state. This command proves useful for splitting monolithic configurations into smaller modules or consolidating related resources. Both approaches require careful execution and testing, as mistakes can leave state in an inconsistent condition that requires manual repair. Version control and state backups mitigate these risks.

Sentinel Policy as Code for Governance

Sentinel enables policy as code for Terraform Cloud and Enterprise users, allowing organizations to enforce rules about infrastructure configurations before they are applied. Policies can mandate specific tags on all resources, prevent creation of publicly accessible storage buckets, require encryption for databases, or enforce cost controls by limiting instance types or quantities. Sentinel policy checks run after the plan completes and before the apply begins, evaluating proposed changes against organizational requirements.

Policies are written in Sentinel's policy language, which provides access to the planned resource changes, configuration, and state data. Organizations typically assign policies one of three enforcement levels: advisory policies that warn but allow application, soft-mandatory policies that can be overridden with proper authorization, and hard-mandatory policies that prevent application without exception. Policy libraries shared across teams ensure consistent governance while allowing customization for specific workspaces or projects.

Testing Strategies for Terraform Configurations

Testing Terraform configurations ensures that infrastructure code behaves as expected before applying it to production environments. The terraform validate command provides basic syntax checking, while terraform plan in a non-production environment reveals how configurations will behave against real APIs without making actual changes. Automated testing tools like Terratest enable integration testing that actually creates infrastructure, runs validation checks, and destroys the test resources.

Unit testing individual modules in isolation verifies their behavior across different input combinations. Integration testing validates that modules work together correctly and produce the expected infrastructure topology. End-to-end testing confirms that the complete infrastructure stack supports the intended applications and services. Continuous integration pipelines that run these tests on every configuration change catch errors early and prevent problematic configurations from reaching production.

Terraform Graph and Dependency Visualization

The terraform graph command generates a visual representation of resource dependencies in DOT format, which can be rendered using GraphViz tools. This visualization helps understand the order in which Terraform will create, update, or destroy resources during apply operations. Complex configurations with many interdependent resources benefit from graph visualization during troubleshooting or architecture documentation efforts. Dependency graphs reveal implicit dependencies derived from resource references and explicit dependencies declared through depends_on meta-arguments.
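The two kinds of dependency edges can be sketched as follows. The bucket name and AMI ID are placeholders, and the explicit dependency is assumed purely for illustration:

```hcl
resource "aws_s3_bucket" "assets" {
  bucket = "example-assets" # placeholder name
}

# Implicit dependency: the reference to aws_s3_bucket.assets.id
# creates an edge in the graph automatically.
resource "aws_s3_bucket_versioning" "assets" {
  bucket = aws_s3_bucket.assets.id

  versioning_configuration {
    status = "Enabled"
  }
}

# Explicit dependency: no attribute of the bucket is referenced,
# so the ordering is declared with depends_on.
resource "aws_instance" "app" {
  ami           = "ami-0123456789abcdef0" # placeholder AMI ID
  instance_type = "t3.micro"

  depends_on = [aws_s3_bucket.assets]
}
```

Running terraform graph against a configuration like this emits DOT output in which both edges appear, which can then be rendered with GraphViz.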

Circular dependencies appear as cycles in the graph, which Terraform cannot resolve and reports as errors. Understanding dependency relationships also matters for performance: Terraform parallelizes operations on resources that do not depend on each other, while dependent resources wait for their prerequisites to complete.

Replacing Resources and Targeted Operations

The -replace option for terraform plan and terraform apply supersedes the deprecated terraform taint command. Rather than marking a resource in state for recreation on a later apply, as taint did, -replace forces replacement of the named resource within that single run. This is useful when resources enter degraded states that Terraform cannot detect through normal attribute comparison, when application-level issues require complete recreation rather than in-place updates, or when manual changes have created drift that attribute updates cannot correct. Targeted applies using the -target flag limit operations to specific resources and their dependencies, enabling surgical infrastructure changes without affecting unrelated components.

While useful during development or emergency remediation, targeted applies should be avoided in normal operations because they can leave infrastructure in an incomplete state where some resources match configuration while others remain outdated. Both replacement and targeting operations require an understanding of resource dependencies and potential ripple effects throughout the infrastructure.

Terraform CLI Configuration and Environment Variables

The Terraform CLI supports configuration through the .terraformrc or terraform.rc file, controlling behaviors like provider installation sources, plugin cache locations, and credential helpers. Organizations operating in air-gapped environments configure provider network mirrors to enable plugin downloads without internet access. The plugin_cache_dir setting prevents repeated provider downloads across multiple configurations, reducing bandwidth usage and initialization time.
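A sketch of a CLI configuration file for an air-gapped setup. The mirror URL is a hypothetical internal host, and the cache directory is assumed to exist already:

```hcl
# ~/.terraformrc (terraform.rc in %APPDATA% on Windows)

# Shared cache so each provider version is downloaded only once per machine.
# Terraform does not create this directory; it must exist beforehand.
plugin_cache_dir = "$HOME/.terraform.d/plugin-cache"

provider_installation {
  # Hypothetical internal mirror. With no "direct" block present,
  # providers are installed from this mirror only.
  network_mirror {
    url = "https://terraform-mirror.example.internal/providers/"
  }
}
```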

Environment variables prefixed with TF_VAR_ automatically populate Terraform input variables of the same name, enabling injection of configuration values from CI/CD systems or shell environments without command-line flags or variable files. The TF_CLI_ARGS environment variable sets default CLI arguments, useful for consistently applying certain flags across all Terraform invocations. Understanding these mechanisms allows teams to tailor Terraform's behavior to organizational standards and operational requirements.

Understanding Terraform Refresh and Drift Detection

Refreshing updates the state file with current resource attributes from provider APIs, identifying differences between actual infrastructure and Terraform's state representation. Refresh occurs automatically during plan and apply operations, ensuring that Terraform makes decisions based on current infrastructure state rather than potentially stale information. The standalone terraform refresh command updates state without planning configuration changes, but it is deprecated in favor of terraform apply -refresh-only, which shows the detected changes and asks for approval before writing them to state.

Drift detection identifies when infrastructure has been modified outside of Terraform's control, whether through manual changes via cloud consoles, other automation tools, or external processes. When drift occurs, Terraform's next plan shows the changes needed to bring infrastructure back to the desired state defined in configuration. Organizations implement different drift management strategies, from automatic remediation that reverts manual changes to alert-only approaches that notify teams of drift for manual investigation.

Remote Execution and Terraform Cloud Workflows

Remote execution runs Terraform operations in Terraform Cloud or Enterprise rather than on local workstations, providing consistent execution environments, detailed logs, and integration with VCS repositories. When VCS integration is configured, Terraform Cloud automatically plans infrastructure changes when pull requests are created or commits are pushed to specified branches, enabling review of infrastructure code before it is merged and applied.

Speculative plans on pull requests show the infrastructure changes that would result from merging the code, allowing reviewers to evaluate both the code itself and its operational impact. Auto-apply settings enable fully automated infrastructure deployments when plans show no errors, though many organizations prefer manual approval steps for production changes. Remote execution also facilitates secure handling of cloud credentials, as they are stored in Terraform Cloud rather than distributed to individual engineer workstations, reducing credential exposure across different operational contexts.

Performance Optimization in Large Terraform Configurations

Large Terraform configurations with hundreds or thousands of resources face performance challenges during plan and apply operations. The -parallelism flag controls how many concurrent operations Terraform executes. The default value of 10 suits most scenarios and can be raised when managing large resource counts against robust provider APIs. However, excessive parallelism can trigger API rate limits or overwhelm provider endpoints, so the value should be tuned to the characteristics of each provider.

Breaking monolithic configurations into smaller, focused configurations improves performance and reduces blast radius when changes fail. Each configuration maintains its own state file and can be planned and applied independently, enabling parallel development across teams. Data sources such as terraform_remote_state let these separate configurations read each other's root module outputs, maintaining the necessary connections while allowing each configuration to evolve independently.
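A sketch of one configuration consuming another's outputs. It assumes a separate network configuration that stores state in S3 and declares a root output named private_subnet_id. The bucket, key, and output names are hypothetical:

```hcl
# Reads the root module outputs of the (assumed) network configuration.
data "terraform_remote_state" "network" {
  backend = "s3"

  config = {
    bucket = "example-tfstate-prod"
    key    = "network/terraform.tfstate"
    region = "us-east-1"
  }
}

resource "aws_instance" "app" {
  ami           = "ami-0123456789abcdef0" # placeholder AMI ID
  instance_type = "t3.micro"

  # Only values exposed as outputs by the network configuration are readable here.
  subnet_id = data.terraform_remote_state.network.outputs.private_subnet_id
}
```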

Resource Targeting and Selective Application Strategies

While generally discouraged, resource targeting through -target flags serves specific purposes in exceptional circumstances. During incident response, targeting enables quick fixes to specific broken resources without waiting for full configuration validation. When debugging complex dependency chains, targeting helps isolate which resource relationships are causing errors by applying only specific subsets of the configuration.

Development workflows sometimes use targeting to iterate quickly on specific resources without repeatedly creating and destroying unrelated infrastructure. However, this creates inconsistencies where some resources match configuration while others do not, requiring an eventual full apply to restore consistency. Organizations that permit targeted applies typically document when and how they should be used, establishing guardrails that prevent misuse while acknowledging their value in certain situations.

Terraform Registry Module Versioning Best Practices

Consuming modules from the Terraform Registry requires version constraints that balance stability with access to improvements. Exact version constraints like version = "1.2.3" provide maximum predictability but prevent automatic updates even for bug fixes. The pessimistic constraint operator ~> allows only the rightmost specified component to increase: version = "~> 1.2.0" accepts patch releases (1.2.1, 1.2.9) but not 1.3.0, while version = "~> 1.2" accepts any 1.x release from 1.2 onward, including new minor versions, but not 2.0.
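The difference between the two pessimistic forms can be sketched with a public registry module. The version numbers below are illustrative only:

```hcl
# Patch releases only: equivalent to ">= 5.1.0, < 5.2.0".
module "vpc_patch_only" {
  source  = "terraform-aws-modules/vpc/aws"
  version = "~> 5.1.0"
}

# Minor and patch releases: equivalent to ">= 5.1.0, < 6.0.0".
module "vpc_minor_allowed" {
  source  = "terraform-aws-modules/vpc/aws"
  version = "~> 5.1"
}
```

The selected version is resolved during terraform init, and running terraform init -upgrade picks the newest release that the constraint allows.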

Module publishers should follow semantic versioning, incrementing major versions for breaking changes, minor versions for backward-compatible features, and patch versions for bug fixes. Module changelogs help consumers understand what changed between versions and assess update risk. Organizations often maintain internal module registries with curated, tested versions approved for production use, preventing teams from directly consuming untested community modules in critical infrastructure deployments.

State Locking Mechanisms Across Different Backends

State locking implementation varies significantly across backend types, affecting operational characteristics and failure modes. The S3 backend uses DynamoDB tables for locking, with each state file having a corresponding DynamoDB item that tracks lock ownership. Lock acquisition failures typically indicate that another process holds the lock, though stuck locks from crashed processes occasionally require manual intervention to clear.

The azurerm backend uses blob leases for locking: Terraform acquires a lease on the state blob and releases it when the operation completes, so a lease left behind by a crashed process may need to be broken manually. The GCS backend implements locking by creating a lock object alongside the state object in the bucket. Understanding these implementation details helps troubleshoot locking issues and select appropriate backends for specific operational requirements.

Strategies for Managing Terraform State File Size Growth

State files grow as infrastructure scales, eventually impacting performance and increasing state operation time. Splitting large configurations into multiple smaller ones with separate state files addresses this growth, though it requires careful planning around resource organization and dependencies. Removing decommissioned resources from state rather than leaving orphaned entries prevents unnecessary state file bloat from historical resources no longer managed. The terraform state rm command removes resources from state without destroying them, useful when transferring management to different Terraform configurations or permanently removing resources from Terraform management while preserving the actual infrastructure.

Regular state file size monitoring helps detect excessive growth before it impacts operations. Very large state files may indicate architectural issues where a single Terraform configuration manages too much infrastructure, suggesting a need for better organizational boundaries that align with team structures or system architectures.

Handling Provider Credential Management Securely

Provider credentials grant Terraform access to create, modify, and delete infrastructure, requiring careful security handling. Hard-coding credentials in configuration files or variable values creates security risks as those files are typically committed to version control. Environment variables provide better security by keeping credentials out of files, though they must be set consistently across all execution environments including developer workstations and CI/CD systems.

Cloud provider credential chains offer superior security by leveraging instance profiles, managed identities, or workload identity federation that eliminate static credentials entirely. Terraform Cloud workspace variables marked as sensitive are stored encrypted and become write-only, so their values cannot be read back through the UI or API after they are set. Organizations implement credential rotation policies and audit credential access, treating Terraform credentials with the same rigor as production application credentials.
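A sketch of a provider block that carries no credentials, using the AWS provider as an assumed example:

```hcl
provider "aws" {
  region = "us-east-1"

  # No access_key or secret_key arguments are set here.
  # The provider falls back to its standard credential chain, for example:
  #   - AWS_ACCESS_KEY_ID / AWS_SECRET_ACCESS_KEY environment variables
  #   - a shared credentials or config file profile
  #   - an instance profile or other role attached to the execution environment
}
```

Keeping the block free of secrets means the same configuration can run unchanged on a workstation, in a CI/CD job, or in a remote execution environment, with each supplying credentials in its own way.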

Terraform Configuration Testing with Sentinel and OPA

Beyond Sentinel's policy as code capabilities in Terraform Cloud, Open Policy Agent (OPA) provides an open-source alternative for policy enforcement. OPA policies written in Rego evaluate Terraform plans in JSON format, enabling organizations to implement governance without vendor lock-in. The terraform show -json command exports plans in the format OPA expects, facilitating integration with existing OPA infrastructure. Policy testing ensures that governance rules work correctly and do not block legitimate infrastructure changes.

Sentinel and OPA both support policy unit testing with test cases that verify policies correctly allow compliant configurations and block non-compliant ones. Continuous integration pipelines run policy tests alongside Terraform validation, ensuring that policy updates do not inadvertently break existing workflows. Organizations balance governance strictness against operational agility, implementing policies that enforce security and compliance requirements while avoiding overly restrictive rules that impede legitimate work.

Terraform Enterprise Air-Gapped Installation Considerations

Organizations with strict security requirements often operate air-gapped environments without internet connectivity, requiring special Terraform Enterprise deployment considerations. Air-gapped installations need provider and module mirrors on the local network, requiring processes to regularly download updates from the public Terraform Registry and transfer them to the isolated environment through approved channels. Custom provider development becomes more common in air-gapped environments when organizations need to integrate with internal systems that do not have public providers.

The Terraform Plugin SDK facilitates custom provider creation, though custom providers require ongoing maintenance as Terraform's provider protocol evolves. Documentation and training materials must also be hosted internally, since practitioners cannot access online resources during development and troubleshooting.

Understanding Terraform Destroy and Resource Cleanup

The terraform destroy command removes all resources defined in the configuration, providing a clean teardown mechanism for temporary environments or decommissioned infrastructure. The destroy operation follows the inverse dependency graph, deleting resources in the opposite order of creation to respect relationships and prevent failures from deleting dependencies before dependent resources. Destroy operations can be partially targeted using -target flags, though this creates incomplete infrastructure states where some resources remain while others are deleted.

The prevent_destroy lifecycle argument provides protection against accidental deletion, causing Terraform to reject any plan that would destroy a protected resource for as long as the argument remains in the configuration. Organizations implement approval processes for destroy operations in production environments, recognizing their potentially catastrophic impact if executed accidentally or maliciously.
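A minimal sketch of the lifecycle argument, with a placeholder bucket name:

```hcl
resource "aws_s3_bucket" "audit_logs" {
  bucket = "example-audit-logs" # placeholder name

  lifecycle {
    # Any plan that would destroy this bucket, including
    # `terraform destroy`, fails with an error while this is set.
    prevent_destroy = true
  }
}
```

Note that the protection lives in configuration: removing the resource block entirely also removes the prevent_destroy setting, so this argument does not guard against that case.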

Pre-Release Terraform Version Testing Strategies

HashiCorp releases Terraform alpha and beta versions before general availability, allowing early adopters to test new features and report issues. Organizations running pre-release versions should limit their use to non-production environments and maintain stable version deployments for critical infrastructure. Pre-release testing helps identify compatibility issues with existing configurations before upgrading production systems.

The required_version constraint prevents accidental use of pre-release versions in configurations intended for stable releases, though it can be relaxed during testing phases. Organizations maintain separate test environments that mirror production architecture but use pre-release Terraform versions, identifying issues without risking production stability. Feedback provided during pre-release periods influences final release quality, making early adoption valuable for organizations that can tolerate the risks of running unfinalized software.
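A sketch of a version constraint for a configuration intended for stable releases. The version bounds are illustrative:

```hcl
terraform {
  # Range operators such as >= and < are documented as not matching
  # pre-release versions (for example 1.9.0-beta1); a pre-release is
  # selected only by an exact constraint naming that version.
  required_version = ">= 1.5.0, < 2.0.0"
}
```

A test environment that deliberately exercises a pre-release build would pin that exact version instead, and the range above would be restored before the configuration is promoted.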

Terraform Cloud Run Triggers and Automation

Run triggers enable automation where changes to one workspace automatically trigger plans or applies in dependent workspaces, orchestrating multi-configuration deployments. For example, changes to a shared networking configuration might trigger runs in all application workspaces that consume those network outputs, ensuring dependent infrastructure updates automatically when upstream changes occur. Trigger configuration specifies whether to automatically apply changes or only create plans for manual review, balancing automation efficiency against change control requirements.

Organizations use run triggers to implement infrastructure deployment pipelines where changes flow through development, staging, and production workspaces in controlled sequences. Notification integrations provide visibility into trigger-initiated runs, ensuring that automated changes remain visible to responsible teams even when triggered without direct human intervention.

Complex Variable Types and Validation Rules

Terraform supports complex variable types including lists, maps, objects, and tuples that model sophisticated data structures. Object types define variables with multiple typed attributes, useful for representing complex infrastructure components that have numerous configuration parameters. Tuple types specify exact element counts and per-element types for ordered collections, providing stricter validation than simple lists. Custom validation rules defined in variable blocks enable configuration-specific constraints beyond basic type checking.

Validation rules evaluate a condition against the variable's value and return an error message when the condition is false, preventing invalid configurations from being applied. In Terraform versions before 1.9, the condition can refer only to the variable being validated. From Terraform 1.9 onward, the condition can also reference other variables and objects, enabling cross-variable validation that keeps related configuration parameters consistent.
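A sketch of an object-typed variable with validation rules. The variable name, attributes, and allowed values are hypothetical, and each condition refers only to the variable itself so that it works on any Terraform version supporting validation blocks:

```hcl
variable "instance" {
  description = "Hypothetical settings for a single compute instance."

  type = object({
    name    = string
    size    = string
    disk_gb = number
  })

  validation {
    condition     = contains(["small", "medium", "large"], var.instance.size)
    error_message = "The size attribute must be one of: small, medium, large."
  }

  validation {
    condition     = var.instance.disk_gb >= 10 && var.instance.disk_gb <= 1000
    error_message = "The disk_gb attribute must be between 10 and 1000."
  }
}
```

Passing a value such as size = "huge" causes terraform plan to stop with the first error message before any provider is contacted.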

Terraform Module Composition Patterns

Module composition creates sophisticated infrastructure patterns by combining smaller, focused modules into larger systems. The root module calls multiple child modules, each managing a specific infrastructure component or capability. Modules can call other modules, creating hierarchical compositions where high-level modules abstract complex underlying details from configuration consumers. Module outputs from lower-level modules feed into input variables of higher-level modules, creating data flow through the composition.
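The data flow between modules can be sketched as below. Both local modules, their input variables, and the private_subnet_ids output are hypothetical and would need to be defined under ./modules:

```hcl
# Lower-level module: assumed to declare an output named private_subnet_ids.
module "network" {
  source     = "./modules/network"
  cidr_block = "10.0.0.0/16"
}

# Higher-level module: consumes the network module's output as an input.
# The reference also makes Terraform create the network resources first.
module "app" {
  source     = "./modules/app"
  subnet_ids = module.network.private_subnet_ids
}

# Root output re-exporting a child module value for callers of this configuration.
output "subnet_ids" {
  value = module.network.private_subnet_ids
}
```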

Organizations develop module libraries representing standard infrastructure patterns, enabling rapid deployment of complex systems from well-tested components. Module version constraints at each composition level ensure compatibility across the module hierarchy, preventing breaking changes from propagating through the system when individual modules are updated.

Terraform Enterprise Clustering and High Availability

Terraform Enterprise supports clustered deployments for high availability and horizontal scaling, distributing load across multiple application servers that share a common database and object storage backend. Redis provides distributed caching and coordination across cluster members, while PostgreSQL stores workspace metadata, run history, and configuration data that persists across application server restarts or failures.

Load balancers distribute traffic across cluster members, providing a single endpoint for users and API clients while enabling rolling updates without downtime. Object storage backends like S3 or Azure Blob Storage host Terraform state files, provider binaries, and run logs, with replication providing additional redundancy. Organizations size clusters based on workspace counts, concurrent run requirements, and desired resiliency levels, scaling infrastructure as Terraform usage grows across the organization.

Terraform Automation and CI/CD Integration Patterns

Continuous integration and deployment pipelines automate Terraform operations, running plans on pull requests and applying changes when code merges to main branches. Pipeline jobs execute terraform init, plan, and apply commands, capturing outputs and enforcing quality gates that prevent problematic configurations from reaching production. Approval steps between plan and apply enable human review of automated infrastructure changes.

Pipeline integration requires careful credential management since automation systems need provider access. Service accounts with appropriate permissions and credential vaulting prevent exposure of sensitive authentication material in pipeline logs or artifacts. Organizations implement drift detection jobs that periodically plan infrastructure and alert when manual changes have occurred, enabling prompt investigation and remediation of unexpected configuration differences.

Conclusion

The path to Terraform expertise extends far beyond certification achievement, representing a fundamental shift in how professionals conceptualize and manage infrastructure. This guide explored the knowledge domains required for the HashiCorp Terraform Associate 003 certification while emphasizing the practical application of these concepts in real-world scenarios. From foundational workflow understanding through advanced state management and enterprise deployment patterns, mastery requires continuous hands-on practice combined with theoretical grounding in infrastructure as code principles.

The certification validates core competencies that organizations increasingly demand as infrastructure complexity grows and multi-cloud strategies become standard rather than exceptional. Practitioners who achieve this certification demonstrate their ability to implement reproducible infrastructure deployments, manage configuration complexity through modular design, and collaborate effectively within teams using version-controlled infrastructure definitions. These skills translate directly into operational value, reducing deployment times, minimizing configuration drift, and improving infrastructure reliability across diverse technology ecosystems.

Modern infrastructure management demands more than technical proficiency with specific tools. The principles underlying Terraform—declarative configuration, immutable infrastructure, and API-driven resource management—represent a broader paradigm shift that influences how organizations approach technology operations. Successful practitioners understand these underlying concepts and can apply them across different tools and platforms as technology landscapes evolve. The Associate 003 certification provides a foundation upon which to build deeper expertise in specialized domains, whether focusing on specific cloud platforms, advancing to Terraform professional certifications, or expanding into adjacent infrastructure automation technologies.

Organizations benefit substantially from team members who possess verified Terraform expertise. Infrastructure as code reduces manual effort, improves consistency, and enables sophisticated disaster recovery and business continuity capabilities through reproducible deployments. Teams working with standardized Terraform practices collaborate more effectively, as configuration files serve as documentation, communication artifacts, and executable specifications simultaneously. The reduced context switching between infrastructure management and other responsibilities enables engineers to focus on higher-value architecture and optimization work rather than repetitive provisioning tasks.

The examination process itself teaches valuable lessons about systematic preparation and knowledge validation. Candidates who approach certification strategically—combining structured study with deliberate practice—develop habits that serve them throughout their careers. The ability to break complex topics into learnable components, verify understanding through practical application, and persist through challenging material creates professional capabilities extending far beyond Terraform itself. These meta-skills prove invaluable across all technical domains and career stages.

Looking forward, infrastructure automation will only increase in importance as organizations manage increasingly distributed, complex technology estates. Kubernetes, serverless platforms, edge computing, and emerging technologies all require sophisticated orchestration that infrastructure as code uniquely provides. Terraform's provider ecosystem continues expanding, encompassing not just infrastructure resources but also SaaS applications, monitoring tools, and identity systems. Professionals with strong Terraform foundations position themselves advantageously for these evolving requirements, possessing transferable skills that remain relevant even as specific technologies change.